Professional GPUs in servers are positioned as devices for high-performance computing, artificial intelligence systems and rendering farms for 3D graphics. Should they be used for encoding, or is this just overkill? Let's take a closer look.

If you're working with multi-stream video, the power of modern CPUs and solutions like Intel Quick Sync is often enough. Moreover, some experts believe that using professional GPUs for decoding and encoding is a waste of resources. On consumer video cards the number of simultaneous streams is deliberately limited to two or three, although, as we have already seen, a little voodoo with the driver gets around this limitation. In the previous article we tested consumer video cards; now we turn to a more serious contender, the NVIDIA RTX A4000.

Getting ready for testing

What if the lscpu output gives you something along the lines of an AMD Ryzen 9 5950X 16-Core Processor, but you have an NVIDIA RTX A4000 with 16 GB of video memory plugged into your computer and you want to transcode and record multiple network cameras? Their feeds usually come in via http, rtp or rtsp, and our task is to catch these streams, transcode them into the required format and write each one to a separate file.

For the test, we at HOSTKEY built a small test bench with the above CPU/GPU configuration and 32 GB of RAM, without any special optimizations. On it, we use ffmpeg to receive broadcasts over http and rtsp (we used this video file, bbb_sunflower_1080p_30fps_normal.mp4, from the Blender demo repository), transcode them in a varying number of parallel ffmpeg instances and record each output to a separate file. As the file name suggests, we are streaming 1080p at 30 frames per second. Only the video is encoded; the scripts below pass -an, so the audio tracks are not written to the output files.

It doesn't really matter whether we take one incoming stream and read it several times or process several different streams in parallel. Networking and background processes on the test bench take up less than 1% of CPU resources, so we can assume that encoding accounts for the main load on the processor and disk subsystem.

Everything below describes the http broadcast, as the results for the rtsp stream turned out to be comparable. To avoid opening a lot of terminal consoles on the server, we wrote simple bash scripts for the test. Each takes the required number of ffmpeg instances as an argument and transcodes the video stream into h264.

Encoding on a bare CPU:

#!/bin/bash

for (( i=0; i<$1; i++ )) do

ffmpeg -i http://XXX.XXX.XXX.XXX:5454/ -an -vcodec h264 -y Output-File-$i.mp4 &

done

For the GPU, we will use the capabilities of the video card through NVENC (we discussed how to build ffmpeg with its support in the first article of the series):

#!/bin/bash

for (( i=0; i<$1; i++ )) do

ffmpeg -i http://XXX.XXX.XXX.XXX:5454/ -an -vcodec h264_nvenc -y Output-File-$i.mp4 &

done
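Since the two scripts differ only in the -vcodec value, they could be folded into one launcher. The following is a minimal sketch, not the script used for the measurements: launch_streams, STREAM_URL and DRY_RUN are names introduced here for illustration, the per-instance log files are an addition, and -nostdin is passed so that background ffmpeg instances do not try to read from the terminal.

```bash
#!/bin/bash
# Sketch of a single launcher for both encoders (illustrative names, see the text above).
STREAM_URL="${STREAM_URL:-http://XXX.XXX.XXX.XXX:5454/}"

# launch_streams ENCODER COUNT
#   ENCODER is h264 or h264_nvenc, COUNT is the number of ffmpeg instances.
#   With DRY_RUN set, the commands are printed instead of executed.
launch_streams() {
    encoder=$1
    count=$2
    i=0
    while [ "$i" -lt "$count" ]; do
        if [ -n "${DRY_RUN:-}" ]; then
            echo "ffmpeg -nostdin -i $STREAM_URL -an -vcodec $encoder -y Output-File-$i.mp4"
        else
            # Each instance writes its status lines to its own log file.
            ffmpeg -nostdin -i "$STREAM_URL" -an -vcodec "$encoder" \
                -y "Output-File-$i.mp4" > "ffmpeg-$i.log" 2>&1 &
        fi
        i=$((i + 1))
    done
    # Block until every background instance has exited.
    wait
}
```

For example, `launch_streams h264_nvenc 10` would start ten NVENC instances, while `DRY_RUN=1 launch_streams h264 3` only prints the three commands.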

The scripts launch the requested number of ffmpeg instances in a loop, each of them snaring our rabbit from the same broadcast. Before running them, check with vlc or ffplay that the stream is actually being broadcast. We will evaluate the result by CPU/GPU load, memory utilization and the quality of the recorded video. The main parameters for our purposes are fps (it must be stable and not fall below 30 frames per second) and speed (it shows whether we manage to process the video on the fly). For real-time processing, speed must stay at or above 1.00x.

A drop in either of these two parameters leads to dropped frames, artifacts, encoding problems and other image damage that you would not want to see on CCTV footage.
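The two criteria can be checked mechanically against an ffmpeg status line. The sketch below is only an illustration: check_realtime is a hypothetical helper, and the thresholds (30 fps, 1.00x) are the ones named above for a 30 fps source.

```bash
#!/bin/bash
# check_realtime LINE
#   Prints "ok" if the status line shows fps >= 30 and speed >= 1.00x,
#   "behind" if either value is lower, "unknown" if a field is missing.
check_realtime() {
    printf '%s\n' "$1" | awk '
        {
            # ffmpeg may pad values ("fps= 87"); drop spaces after "=".
            gsub(/= +/, "=")
            fps = ""; speed = ""
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^fps=/)   { fps = substr($i, 5) }
                if ($i ~ /^speed=/) { speed = substr($i, 7); sub(/x$/, "", speed) }
            }
            if (fps == "" || speed == "") { print "unknown"; exit }
            if (fps + 0 >= 30 && speed + 0 >= 1.0) print "ok"
            else print "behind"
        }'
}

# Status lines taken from the CPU runs later in this article:
check_realtime "frame=491 fps=37 q=29.0 size=4864kB time=00:00:13.66 bitrate=2915.6kbits/s speed=1.03x"   # prints: ok
check_realtime "frame=140 fps=23 q=29.0 size=1024kB time=00:00:01.96 bitrate=2954.4kbits/s speed=0.446x"  # prints: behind
```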

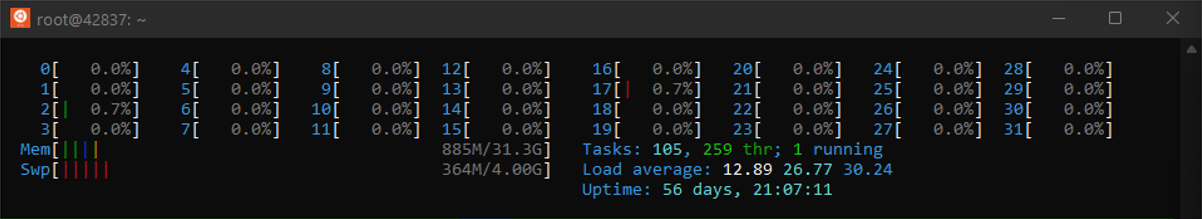

Checking encoding on a bare CPU

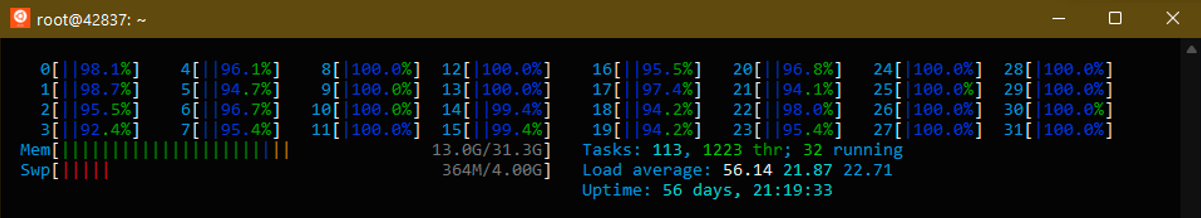

Running one copy of ffmpeg gives us this initial picture:

CPU usage averages 18-20% per core, and the ffmpeg output shows the following:

frame=196 fps=87 q=-1.0 Lsize=2685kB time=00:00:06.43 bitrate=3419.0kbits/s speed=2.84x

There is a lot in reserve, so we can try three streams at once:

frame=310 fps=54 q=29.0 size=4608kB time=00:00:07.63 bitrate=4945.3kbits/s speed=1.33x

Four streams take up almost all the resources of the CPU and eat up 13 GB of RAM.

That being said, the ffmpeg output shows that it can handle a little more:

frame=332 fps=49 q=29.0 size=3072kB time=00:00:08.36 bitrate=3007.9kbits/s speed=1.23x

We increase the number of streams to five. The processor runs at its limit, and in places the frame rate and bitrate drop by 5–10%:

frame=491 fps=37 q=29.0 size=4864kB time=00:00:13.66 bitrate=2915.6kbits/s speed=1.03x

Running six streams shows that the limit has indeed been reached. We fall further and further behind real time and start to drop frames:

frame=140 fps=23 q=29.0 size=1024kB time=00:00:01.96 bitrate=2954.4kbits/s speed=0.446x

Testing the might of the GPU

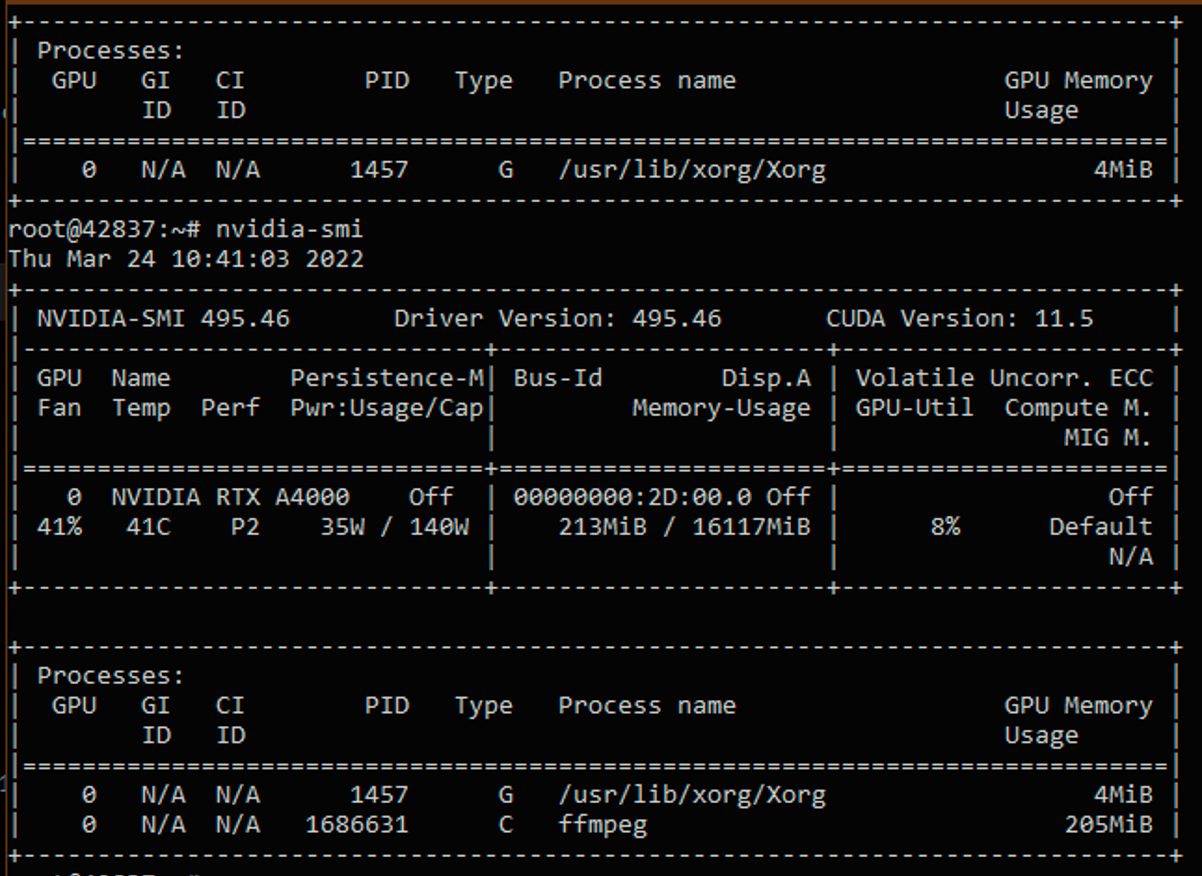

We start with one ffmpeg stream encoded through h264_nvenc. We check the nvidia-smi output to make sure that the video card is actually involved:

As the output is quite cumbersome, we will monitor the GPU parameters with the following command:

nvidia-smi dmon -s pucm

Let's decipher the abbreviations:

- pwr: power consumed by the video card, in watts;
- gtemp: video core temperature, in degrees Celsius;
- sm, mem, enc, dec: utilization of the streaming multiprocessors (SM), memory, encoder and decoder, as a percentage;
- mclk, pclk: current memory and processor clock frequencies, in MHz;
- fb: frame buffer usage, in MB.

| gpu (Idx) | pwr (W) | gtemp (C) | mtemp (C) | sm (%) | mem (%) | enc (%) | dec (%) | mclk (MHz) | pclk (MHz) | fb (MB) | bar1 (MB) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 35 | 48 | - | 1 | 0 | 6 | 0 | 6500 | 1560 | 213 | 5 |

In this output, we will be interested in the values of GPU encoder load and video memory use.
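To follow just those two values, the dmon text can be filtered by column name. This is a sketch under one assumption: that the header lines of `nvidia-smi dmon` start with `#` (for example `# gpu   pwr gtemp ...`), so a data column sits one field to the left of its header. dmon_column is a hypothetical helper name.

```bash
#!/bin/bash
# dmon_column NAME
#   Reads `nvidia-smi dmon` text on stdin and prints column NAME
#   (for example enc or fb) from every data row.
dmon_column() {
    awk -v col="$1" '
        # Header lines begin with "#", which counts as field 1.
        /^#/ {
            for (i = 2; i <= NF; i++) if ($i == col) idx = i - 1
            next
        }
        idx && NF >= idx { print $idx }
    '
}

# Example with the 14-stream sample from this article:
dmon_column enc <<'EOF'
# gpu   pwr gtemp mtemp    sm   mem   enc   dec  mclk  pclk    fb  bar1
# Idx     W     C     C     %     %     %     %   MHz   MHz    MB    MB
    0    68    59     -    18     7   100     0  6500  1920  2886    33
EOF
# prints: 100
```

On a live system the same function could be fed directly, for instance `nvidia-smi dmon -s pucm -c 5 | dmon_column fb`, where -c limits the number of samples.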

The ffmpeg output gives the following results:

frame=192 fps=96 q=23.0 Lsize=1575kB time=00:00:06.36 bitrate=2027.1kbits/s speed=3.17x

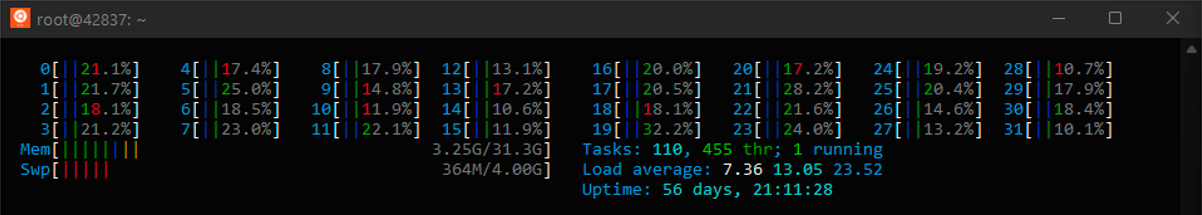

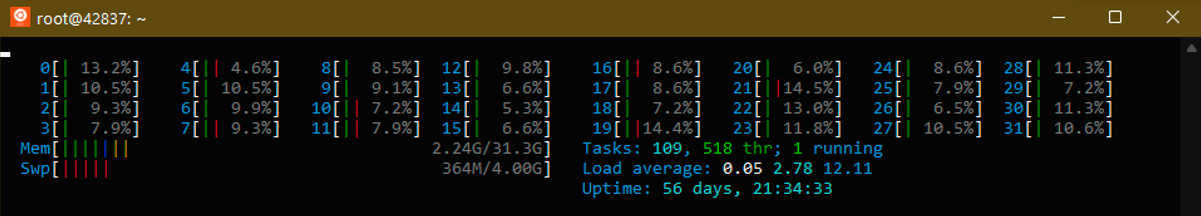

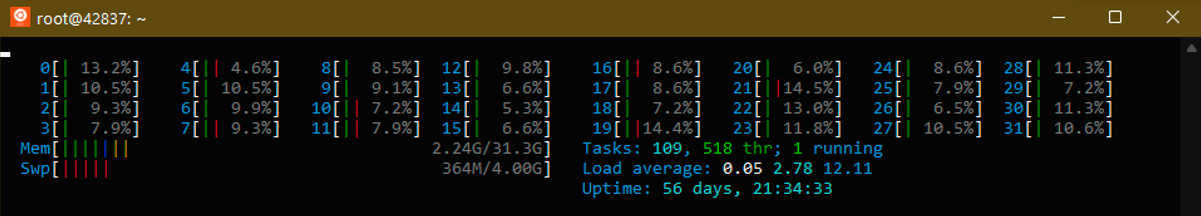

We launch five streams at once. As can be seen from the htop output, in the case of GPU encoding, the CPU load is minimal, and most of the work falls on the video card. The disk subsystem is also loaded much less.

| gpu (Idx) | pwr (W) | gtemp (C) | mtemp (C) | sm (%) | mem (%) | enc (%) | dec (%) | mclk (MHz) | pclk (MHz) | fb (MB) | bar1 (MB) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 36 | 48 | - | 8 | 2 | 40 | 0 | 6500 | 1560 | 1035 | 14 |

The load on the encoding blocks has increased to 40% and we have taken up almost a gigabyte of video memory, but the video card is still not heavily loaded. The ffmpeg output confirms this, showing that we have the resources to at least double the number of streams:

frame=239 fps=67 q=36.0 Lsize=2063kB time=00:00:07.93 bitrate=2130.3kbits/s speed=2.22x

We move up to ten streams. CPU usage stays at 15-20%.

Graphics card figures:

| gpu (Idx) | pwr (W) | gtemp (C) | mtemp (C) | sm (%) | mem (%) | enc (%) | dec (%) | mclk (MHz) | pclk (MHz) | fb (MB) | bar1 (MB) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 55 | 48 | - | 14 | 4 | 61 | 0 | 6500 | 1920 | 2064 | 24 |

Power consumption has increased and the video card has raised its core clock (pclk went from 1560 to 1920 MHz), but the encoder and the video memory still have headroom for more load. We check the ffmpeg output to make sure:

frame=1401 fps=36 q=29.0 Lsize=12085kB time=00:00:46.66 bitrate=2121.5kbits/s speed=1.2x

We add four more streams, for 14 in total, and the load on the encoding blocks reaches 100%.

| gpu (Idx) | pwr (W) | gtemp (C) | mtemp (C) | sm (%) | mem (%) | enc (%) | dec (%) | mclk (MHz) | pclk (MHz) | fb (MB) | bar1 (MB) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 68 | 59 | - | 18 | 7 | 100 | 0 | 6500 | 1920 | 2886 | 33 |

The ffmpeg output confirms that we have reached the limit. CPU usage still does not exceed 20%.

frame=668 fps=31 q=26.0 Lsize=5968kB time=00:00:22.23 bitrate=2199.0kbits/s speed=1.04x

The test with 15 streams shows that the GPU is starting to fall behind: the encoders are overloaded, and temperature and power consumption rise.

| gpu (Idx) | pwr (W) | gtemp (C) | mtemp (C) | sm (%) | mem (%) | enc (%) | dec (%) | mclk (MHz) | pclk (MHz) | fb (MB) | bar1 (MB) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 70 | 63 | - | 18 | 7 | 100 | 0 | 6500 | 1920 | 3092 | 35 |

The ffmpeg output also confirms that the graphics card is struggling. The frame rate has fallen below 30 fps and the processing speed below real time:

frame=310 fps=28 q=29.0 size=2560kB time=00:00:10.23 bitrate=2049.4kbits/s speed=0.939x

CPU vs. GPU

Let's summarize: using a GPU in such a configuration can be deemed justified, because the video card handles roughly three times as many streams (14 versus 5) as a processor that is far from the weakest (especially one without hardware encoding support). On the other hand, we use only a small part of the video adapter's capabilities. Since the rest of its blocks and the video memory are barely loaded, the resources of an expensive device are being used inefficiently.
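The arithmetic behind this summary can be reproduced from the figures above. The sketch below uses only numbers from this article (5 and 14 real-time streams, 213 MB and 2886 MB of frame buffer); treating 16 GB as 16384 MB is an assumption, and stream_summary is an illustrative name.

```bash
#!/bin/bash
# stream_summary: recompute the headline figures from the measurements above.
stream_summary() {
    LC_ALL=C awk 'BEGIN {
        cpu_streams = 5     # last CPU run that stayed at or above 1.00x
        gpu_streams = 14    # last GPU run that stayed at or above 1.00x
        fb_1  = 213         # frame buffer with 1 stream, MB
        fb_14 = 2886        # frame buffer with 14 streams, MB
        vram  = 16384       # 16 GB of video memory, assumed to be 16384 MB

        printf "GPU/CPU stream ratio: %.1f\n", gpu_streams / cpu_streams
        printf "Frame buffer per added stream: %.0f MB\n", (fb_14 - fb_1) / (gpu_streams - 1)
        printf "Frame buffer in use at 14 streams: %.0f%% of 16 GB\n", 100 * fb_14 / vram
    }'
}

stream_summary
# prints:
# GPU/CPU stream ratio: 2.8
# Frame buffer per added stream: 206 MB
# Frame buffer in use at 14 streams: 18% of 16 GB
```

In other words, the encoder blocks saturate while less than a fifth of the video memory is occupied.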

Sophisticated readers may point out that we didn't test 2K/4K modes, didn't use the capabilities of modern codecs (such as h265 and VP8/9), and installed a video adapter based on the previous generation's architecture in the test bench. The A5000 should show a better result, so we will check it in the next article, and then we will dissect Intel Quick Sync.