In this article, we compare NVIDIA's new GeForce RTX 4090 with several of the manufacturer's professional cards and try to answer the question: is it more beneficial to use the new graphics card in professional workflows, or is it still better to use server graphics cards?

Professional and gaming GPUs differ in several significant ways, determined by their intended use:

- Scope of application. Server graphics cards are used in ML development, rendering and modeling of complex objects, scientific research, cinematography, and more. Gaming graphics cards are designed for individual use.

- Cooling. The cooling system of a professional card blows hot air out of the server or workstation, and its turbine fan is designed for continuous operation. Gaming cards circulate air over the card inside the case, so they must be installed in cases with good ventilation. Gaming card fans are not designed for long-term continuous operation and tend to fail under it.

- Performance and energy efficiency. Professional GPUs allow you to do more computing with less power. This feature largely accounts for the high cost of server video cards.

- Production features. Quality control in the manufacture of professional cards is stricter than in the creation of gaming cards.

- Connectors. Professional cards have no HDMI or DVI connectors for video output; only DisplayPort is provided.

- Additional functionality. Not all server GPUs can be used for gaming.

GeForce RTX 4090 Technology Overview

The GeForce RTX 4090 was released at the end of 2022 and continues NVIDIA's line of desktop accelerators. It has attracted great interest among gamers around the world.

The key features of the card are:

- As with the entire GeForce RTX 40 line, the new AD10x GPUs (AD102 in the 4090) are based on the Ada Lovelace architecture and use the 4N process (TSMC).

- Improved performance in ray tracing and in compute operations on tensor cores.

- The 4N process improves energy efficiency by several percent.

- The size of the card (304 by 137 mm, 3 slots) makes it difficult to mount it in both desktop PCs and servers.

- The gaming cooling system often makes it impossible to mount the 4090 in GPU servers.

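- The RTX 4090 has about 56% more CUDA cores than the RTX 3090 (16,384 versus 10,496); the full AD102 die has roughly 70% more than the full GA102.

- NVIDIA DLSS 3 technology uses motion vector analysis together with optical flow data from the Optical Flow Accelerator (OFA).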

- The low latency NVIDIA Reflex platform enhances the gaming experience for professional gamers.

- Eighth-generation NVENC encoder with AV1 encoding support.

- NVIDIA Broadcast app.

- NVIDIA Studio.

Specifications for NVIDIA RTX A4000, NVIDIA RTX A5000, NVIDIA RTX 3090 and NVIDIA RTX 4090 Graphics Cards

| Specification | RTX A4000 | RTX A5000 | RTX 3090 | RTX 4090 |
|---|---|---|---|---|
| Architecture | Ampere | Ampere | Ampere | Ada Lovelace |
| Process technology | 8 nm | 8 nm | 8 nm | 4N |
| GPU | GA104 | GA102 | GA102 | AD102 |
| Number of transistors (billion) | 17.4 | 28.3 | 28.3 | 76.3 |
| Base clock (GHz) | 0.74 | 1.17 | 1.39 | 2.23 |
| Boost clock (GHz) | 1.56 | 1.70 | 1.70 | 2.52 |
| Memory frequency (MHz) | 1750 | 2000 | 1219 | 1325 |
| Memory bandwidth (GB/s) | 448 | 768 | 936.2 | 1008 |
| GPU memory (GB) | 16 | 24 | 24 | 24 |
| Memory type | GDDR6 | GDDR6 | GDDR6X | GDDR6X |
| Cache memory (MB) | 4 | 6 | 6 | 72 |
| ECC memory | yes | yes | no | no |
| CUDA cores | 6144 | 8192 | 10496 | 16384 |
| Tensor cores | 192 | 256 | 328 | 512 |
| RT cores | 48 | 64 | 82 | 128 |
| Number of texture units | 192 | 256 | 328 | 512 |
| Maximum power (W) | 140 | 230 | 350 | 450 |
| Computing performance FP16 (half) (teraflops) | 19.2 | 27.8 | 35.6 | 82.6 |
| Computing performance FP32 (float) (teraflops) | 19.2 | 27.8 | 35.6 | Up to 82.6 |
| Computing performance FP64 (double) | 599 gigaflops | 867.8 gigaflops | 556 gigaflops | 1.3 teraflops |
| Theoretical maximum fill rate (gigapixels/s) | 149.8 | 162.7 | 189.8 | 444 |
| Theoretical texture fetch rate (gigatexels/s) | 149.8 | 433.9 | 566 | 1290 |
| Interface | PCI-E 4.0 x16 | PCI-E 4.0 x16 | PCI-E 4.0 x16 | PCI-E 4.0 x16 |
| NVIDIA DLSS | no | no | yes | 3 |
| NVLink | no | 2-board low-profile configuration (2-slot and 3-slot bridges) | no | no |
| CUDA compute capability | 8.6 | 8.6 | 8.6 | 8.9 |
| Vulkan support | 1.3 | 1.3 | 1.2 | 1.3 |
| DirectX | 12 Ultimate | 12 Ultimate | 12 Ultimate | 12 Ultimate |
| Shader Model | 6.6 | 6.6 | 6.7 | 6.7 |
| OpenGL | 4.6 | 4.6 | 4.6 | 4.6 |
| OpenCL | 3.0 | 3.0 | 3.0 | 3.0 |
| Virtual GPU (vGPU) software support | no | NVIDIA Virtual PC (vPC) and Virtual Applications (vApps), NVIDIA RTX vWS, NVIDIA Virtual Compute Server | no | no |
| Cost (€) | 1500 | 3000 | 1600 | from 1900 |

The new architecture, memory bandwidth and number of tensor cores, DLSS 3 technology and other characteristics of the GeForce RTX 4090 define a wide range of applications for the GPU - not only gaming, but also working with artificial intelligence and complex calculations.
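The specification figures can be reduced to a few derived ratios for a quick paper comparison. The sketch below is only an illustration, not part of the HOSTKEY test suite: the numbers are copied from the table, while the dictionary layout and the helper names `derived_metrics` and `core_ratio` are assumptions made for this example. It uses theoretical peak FP32 throughput, maximum board power, and list prices (the minimum price for the RTX 4090), so the results show on-paper efficiency rather than measured performance.

```python
# Figures copied from the specification table; the layout and names are illustrative.
SPECS = {
    "RTX A4000": {"cuda_cores": 6144, "fp32_tflops": 19.2, "power_w": 140, "cost_eur": 1500},
    "RTX A5000": {"cuda_cores": 8192, "fp32_tflops": 27.8, "power_w": 230, "cost_eur": 3000},
    "RTX 3090": {"cuda_cores": 10496, "fp32_tflops": 35.6, "power_w": 350, "cost_eur": 1600},
    "RTX 4090": {"cuda_cores": 16384, "fp32_tflops": 82.6, "power_w": 450, "cost_eur": 1900},
}


def derived_metrics(spec):
    """Return theoretical FP32 GFLOPS per watt and per euro for one card."""
    gflops = spec["fp32_tflops"] * 1000
    return {
        "gflops_per_watt": gflops / spec["power_w"],
        "gflops_per_eur": gflops / spec["cost_eur"],
    }


def core_ratio(card_a, card_b):
    """Return how many times more CUDA cores card_a has than card_b."""
    return SPECS[card_a]["cuda_cores"] / SPECS[card_b]["cuda_cores"]


for name, spec in SPECS.items():
    m = derived_metrics(spec)
    print(f"{name:10s} {m['gflops_per_watt']:7.1f} GFLOPS/W {m['gflops_per_eur']:7.1f} GFLOPS/EUR")

print(f"RTX 4090 vs RTX 3090 CUDA cores: {core_ratio('RTX 4090', 'RTX 3090'):.2f}x")
```

Because these ratios come from peak figures, they are best read as a first filter; the benchmark results remain the more reliable basis for comparison.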

HOSTKEY Testing

Description of the test environment

AMD Ryzen 9 5900X 12-Core Processor (3.80 GHz)

32 GB DDR4-3200 ECC SDRAM (1600 MHz)

Microsoft Windows 10 Professional 64-bit

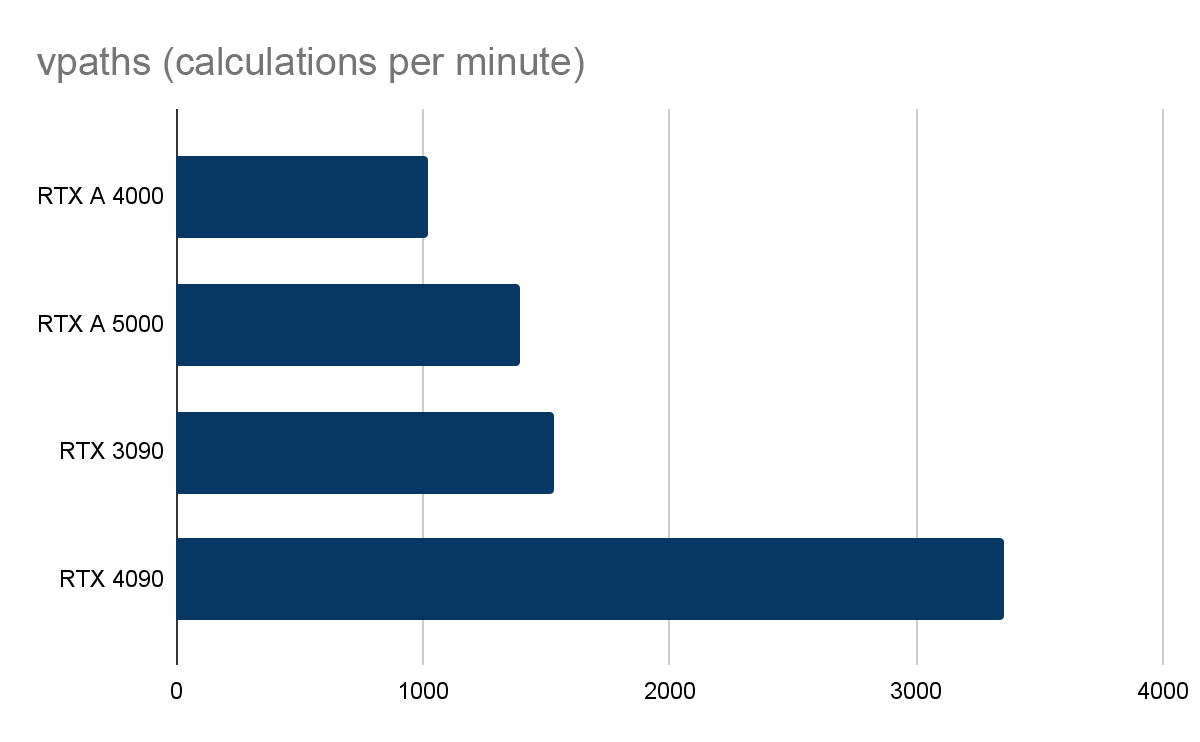

V-Ray GPU CUDA test

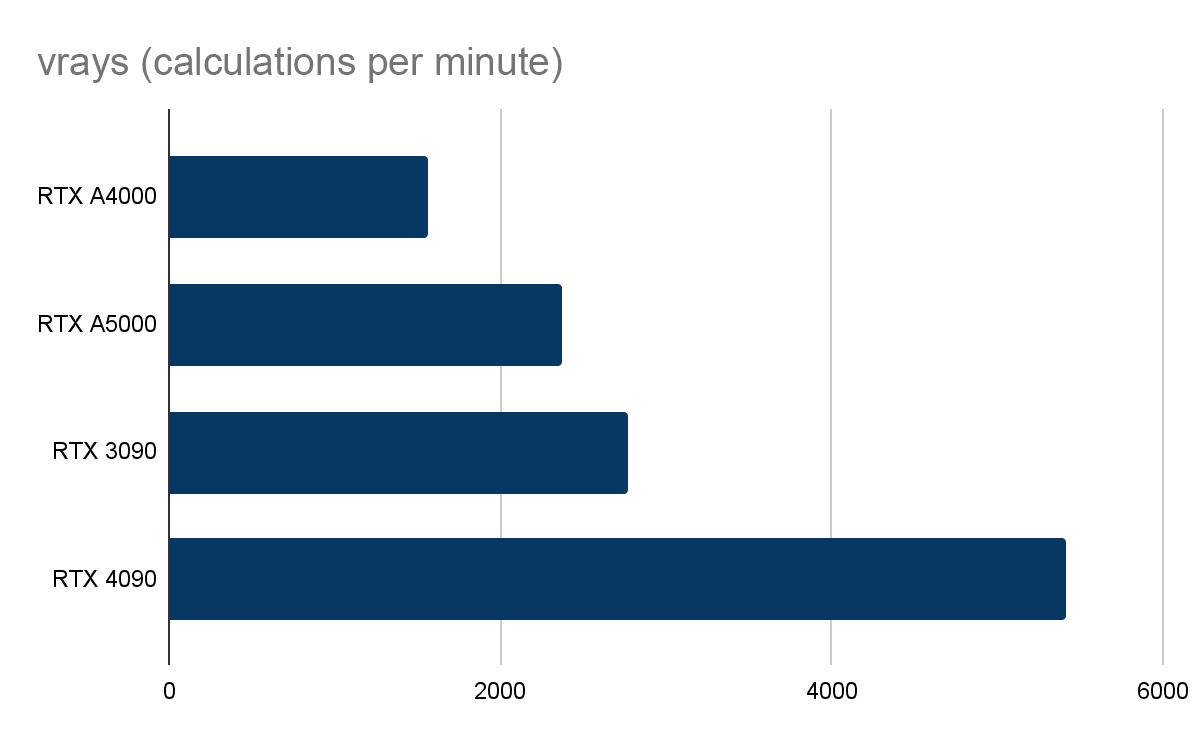

V-Ray GPU RTX Test

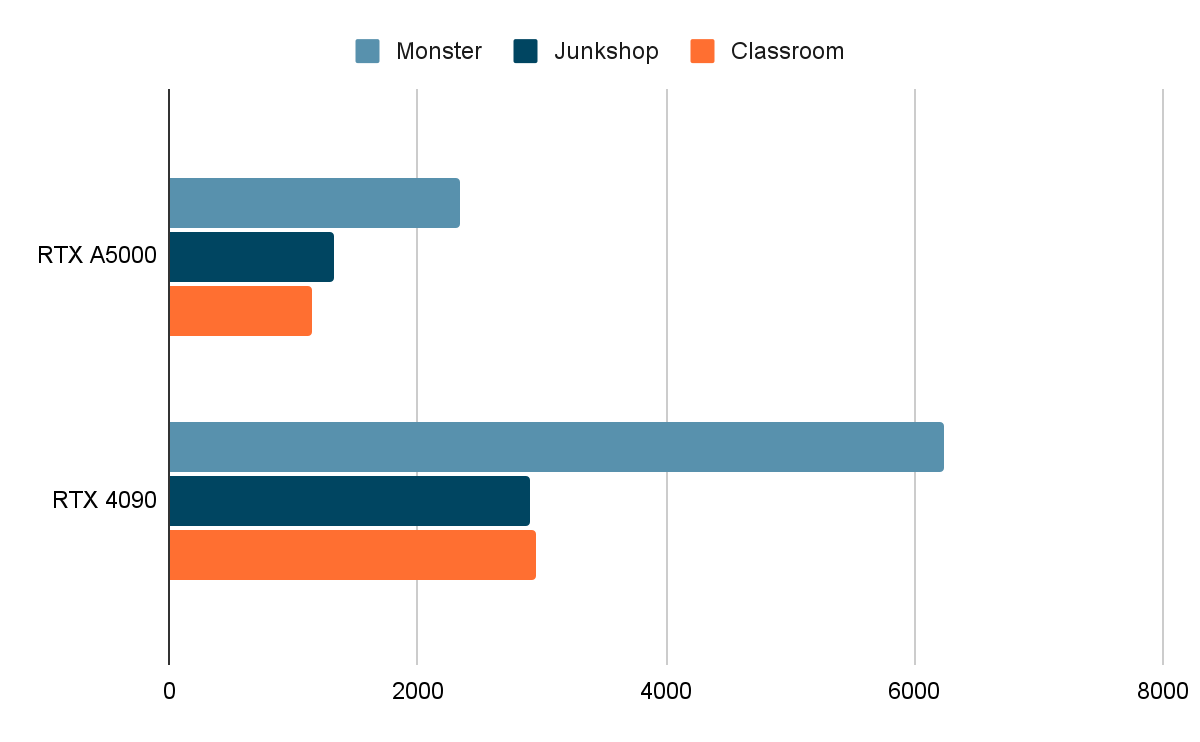

Blender Benchmark

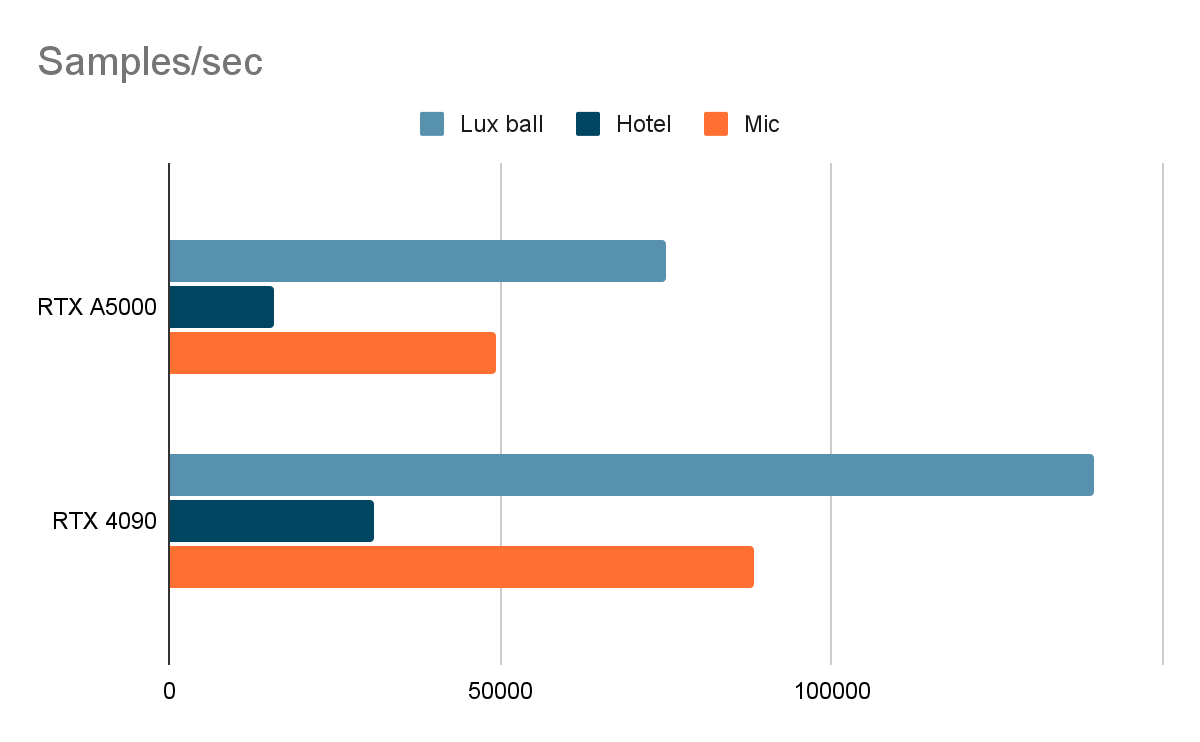

In this test and in LuxMark, we will only compare the RTX A5000 and RTX 4090 cards, as they are the most relevant in the context of this article.

LuxMark

We measured relative GPU performance in rendering. The GeForce RTX 4090 looks impressive in these tests: its results are almost double those of the RTX 3090 and of the professional GPUs. The V-Ray GPU RTX benchmark, which measures ray-traced rendering performance, likewise shows the RTX 4090 running about twice as fast as the RTX 3090.

"Dogs vs. Cats"

To compare GPU performance on neural networks, we used the Dogs vs. Cats dataset. The test network analyzes a photo and determines whether it shows a cat or a dog. All necessary initial data is here. We ran this test on different GPUs and in different cloud services and got the following results:

Full cycle of training (min.)

The full training cycle of the test neural network took from 31 to 60 minutes. The GeForce RTX 4090 finished in 31 minutes, ahead of all the other GPUs. The most noticeable difference was between the RTX 3090 and the RTX 4090: the new generation of NVIDIA GPUs completed the calculations almost twice as fast as the previous generation.
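For readers who want to reproduce this kind of measurement, a full training cycle can be timed with a simple wall-clock wrapper. The sketch below is an assumption about how such a test might be instrumented, not the harness HOSTKEY used: `time_training`, `speedup`, and the placeholder `dummy_epoch` are hypothetical names, and the placeholder workload only stands in for a real training epoch.

```python
import time


def time_training(train_one_epoch, epochs):
    """Run `epochs` epochs and return the total wall-clock time in minutes."""
    start = time.perf_counter()
    for epoch in range(epochs):
        train_one_epoch(epoch)
    return (time.perf_counter() - start) / 60.0


def speedup(slow_minutes, fast_minutes):
    """Return how many times faster the second run is than the first."""
    if slow_minutes <= 0 or fast_minutes <= 0:
        raise ValueError("durations must be positive")
    return slow_minutes / fast_minutes


def dummy_epoch(epoch):
    # Placeholder workload; a real test would train the network for one epoch here.
    sum(i * i for i in range(100_000))


elapsed = time_training(dummy_epoch, epochs=3)
print(f"placeholder run: {elapsed:.4f} min")

# Slowest (60 min) and fastest (31 min) full training cycles reported in the text.
print(f"fastest vs slowest reported run: {speedup(60, 31):.2f}x")
```

When the epoch function launches GPU work asynchronously, the framework's synchronization call should run before the timer is read, otherwise the measured time can come out too short.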

Tests have shown that the closest competitor to the RTX 4090 is the RTX A5000, so it remains to compare these two cards in terms of price-to-performance ratio. In all the tests, the new NVIDIA card delivered roughly twice the result of the RTX A5000. At the same time, the RTX 4090 costs much less: 138,000 rubles (minimum price) compared to 216,000 rubles. The choice would seem obvious, but there are nuances:

- The A5000 consumes significantly less power and can be a cost-effective solution for tasks that keep the GPU under constant high load over a long period.

- The RTX A5000 supports NVLink, which is useful when training neural networks.

- A5000 GPUs have no restrictions on using NVENC/NVDEC for parallel video transcoding tasks.

- With a specialized license, A5000-class professional GPUs can be virtualized and presented on the server as several smaller virtual GPUs.

- NVIDIA prohibits the use of its gaming card drivers in data centers and for remote use outside the office.

Although NVIDIA's promotional photo shows a row of 4090s with large fans in a 3U format, in practice this configuration is almost impossible to buy. The only cards in stock are oversized gaming models that take up 4 units, are taller than standard, and have fans that exhaust air upward and downward. Such cards cannot be used in servers or in most workstations.
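The trade-off between purchase price and power draw can be put into rough numbers. The sketch below takes the ruble prices from the text and the maximum board power from the specification table; the electricity tariff `ASSUMED_TARIFF_RUB_PER_KWH` is a placeholder chosen for this example, not a figure from the tests, and the function names are hypothetical. It also assumes each card draws its full rated power around the clock, which overstates real consumption.

```python
# Prices (rubles) from the text; maximum board power (watts) from the specification table.
PRICE_RUB = {"RTX 4090": 138_000, "RTX A5000": 216_000}
POWER_W = {"RTX 4090": 450, "RTX A5000": 230}

# Approximate relative performance reported in the tests (RTX A5000 = 1.0).
RELATIVE_SPEED = {"RTX 4090": 2.0, "RTX A5000": 1.0}

# Assumption: placeholder tariff, replace with the real local price.
ASSUMED_TARIFF_RUB_PER_KWH = 6.0

HOURS_PER_YEAR = 24 * 365


def energy_kwh(card, hours):
    """Energy used at full rated board power over the given number of hours."""
    return POWER_W[card] * hours / 1000


def total_cost_rub(card, hours, tariff_rub_per_kwh):
    """Purchase price plus electricity cost for continuous operation."""
    return PRICE_RUB[card] + energy_kwh(card, hours) * tariff_rub_per_kwh


def price_per_unit_speed(card):
    """Purchase price divided by relative performance."""
    return PRICE_RUB[card] / RELATIVE_SPEED[card]


for card in PRICE_RUB:
    kwh = energy_kwh(card, HOURS_PER_YEAR)
    cost = total_cost_rub(card, HOURS_PER_YEAR, ASSUMED_TARIFF_RUB_PER_KWH)
    print(f"{card:10s} {kwh:8.1f} kWh/year, "
          f"{cost:10.0f} RUB after one year, "
          f"{price_per_unit_speed(card):9.0f} RUB per unit of speed")
```

Under these assumptions a year of continuous operation comes to 3,942 kWh for the RTX 4090 and 2,014.8 kWh for the RTX A5000, so the size of the A5000's running-cost advantage depends directly on the tariff and on how long the cards stay under load.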

Conclusion

The transition to the new Ada Lovelace architecture has significantly increased the performance of the GeForce RTX 4090. The improved tensor and RT cores raise the quality and extend the capabilities of real-time ray tracing. The 24 GB of memory makes it possible to process large amounts of data.

The GeForce RTX 4090 is primarily designed for gaming but is also well suited to various compute workloads: AI, data analysis, and machine learning. The new architecture greatly outperforms the previous generation of NVIDIA GPUs. The main limitations for professional use are the card's high power consumption and the inability to combine several cards using NVLink.

An alternative to purchasing a video card is to rent a GPU server. Our calculations show that monthly rentals for the GeForce RTX 4090 and RTX A5000 cards are comparable in price. Accordingly, if you need to perform professional tasks, renting a GeForce RTX 4090 card can be beneficial due to its high performance.