Proxmox 9¶

In this article

- Deployment Features

- Proxmox 9. Installation

- First Login and Basic Check

- Network: Bridge vmbr0

- Disks and Storage

- Loading ISO Images

- Create First VM (Ubuntu 25.10)

- Windows Installation

- LXC Containers: Quick Start

- Typical VM Profiles

- Connecting VMs and LXC in One Network

- Backups and Templates

- Common Problems and Solutions

- Diagnostics: Cheat Sheet

- Mini-FAQ

- Readiness Checklist for System

- Ordering a Server with Proxmox 9 using API

Deployment Features¶

| ID | Name of Software | Compatible OS | VM | BM | VGPU | GPU | Min CPU (Cores) | Min RAM (GB) | Min HDD/SSD (GB) | Custom Domain | Active |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 350 | ProxmoxVE 9 community edition | Debian 13 | + | + | + | + | 2 | 2 | - | No | ORDER |
| 25 | ProxmoxVE 7.6 community edition | Debian 11 | + | + | + | + | 2 | 2 | - | No | No |
| 32 | ProxmoxVE 8.x community edition | Debian 12 | + | + | + | + | 2 | 2 | - | No | No |

Proxmox VE 9.0

Proxmox VE 9.0 was released on August 5, 2025, and has significant differences from version 8.x:

Main new features of version 9.0:

- Transition to Debian Trixie;

- Snapshots for virtual machines on thick-provisioned LVM storage (technology preview);

- High availability (HA) rules for node and resource affinity;

- Fabrics for the software-defined networking (SDN) stack;

- Modernized mobile web interface;

- ZFS supports adding new devices to existing RAIDZ pools with minimal downtime.

Critical changes in version 9.0:

- The testing repository has been renamed to pve-test;

- Possible changes in the names of network interfaces;

- VirtIO vNIC: default value changed for the MTU field;

- Update to AppArmor 4;

- Privilege VM.Monitor revoked;

- New privilege VM.Replicate for storage replication;

- Creating privileged containers requires the Sys.Modify privilege;

- Support for configuring maxfiles for backups discontinued;

- GlusterFS support discontinued;

- systemd logs the warning "System is tainted: unmerged-bin" after boot.

If you ordered a server with version 9.0, be sure to familiarize yourself with the detailed developer documentation.

Note

Unless otherwise specified, by default we install the latest release version of the software from official repositories.

Proxmox 9. Installation¶

The Proxmox VE service is installed automatically within 15-20 minutes after the server is deployed. An email is then sent to the mailbox linked to your account; it confirms the installation and contains a link in the format https://proxmox<ID_server>.hostkey.in that opens the Proxmox VE web management interface. Sign in with:

- Login - root;

- Password - system password.

Attention

If you installed Proxmox as the operating system, open the web interface at https://server_IP:8006 (the interface is served over HTTPS only).

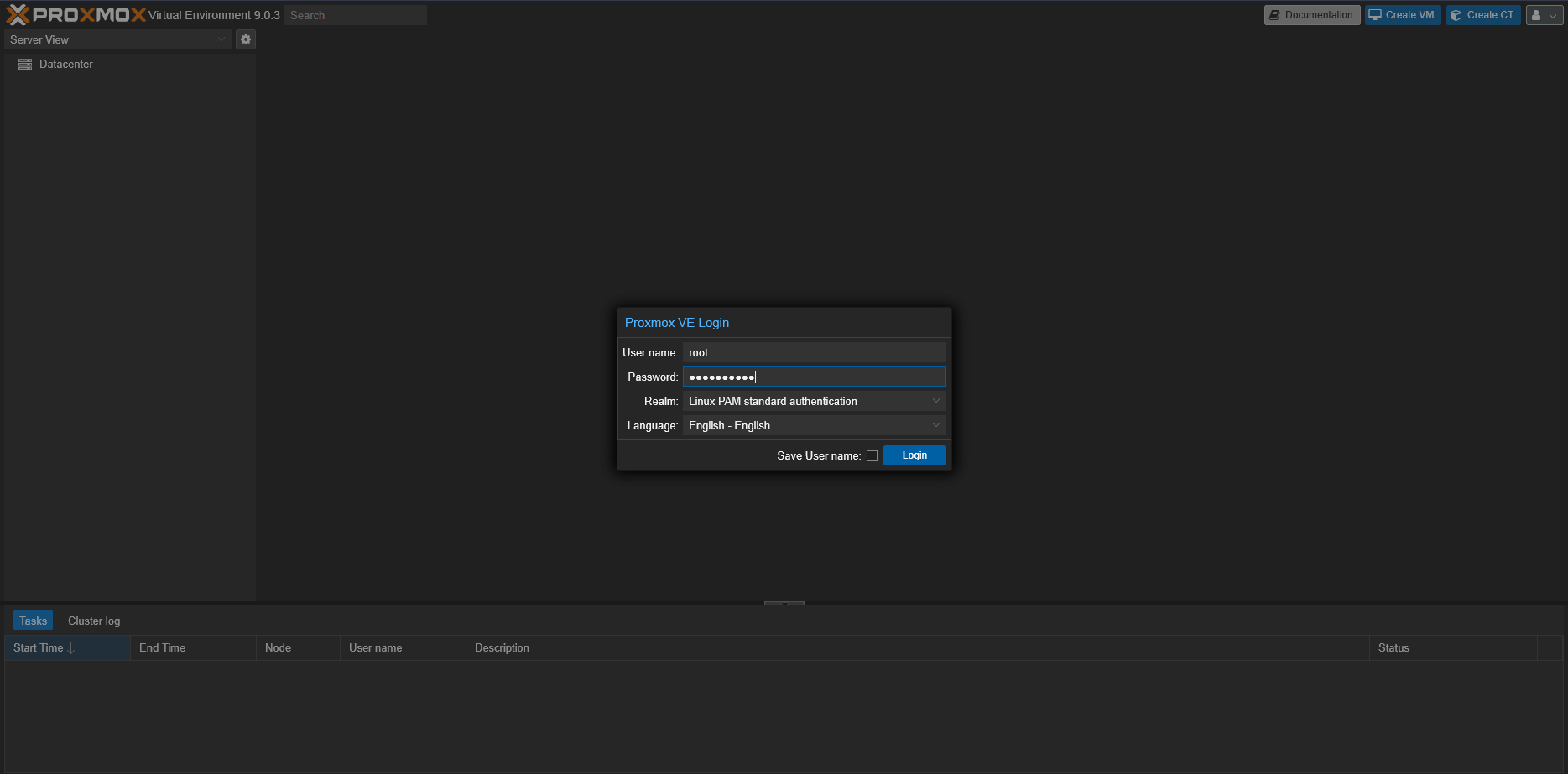

First Login and Basic Check¶

-

Open your browser >

https://<server_IP>:8006and enter the credentials:

-

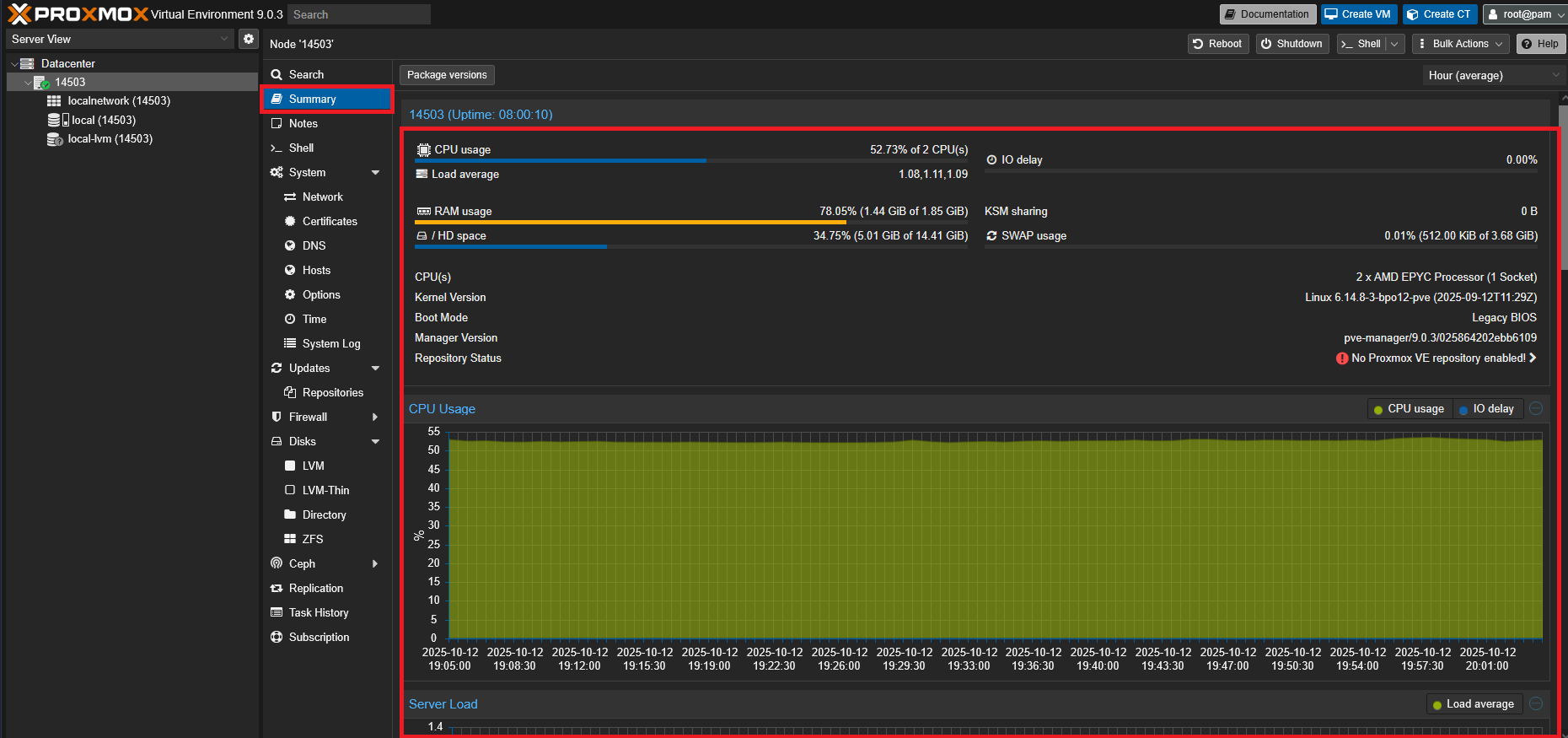

Navigate to: Datacenter > Node > Summary - check CPU, RAM, disks, uptime.

-

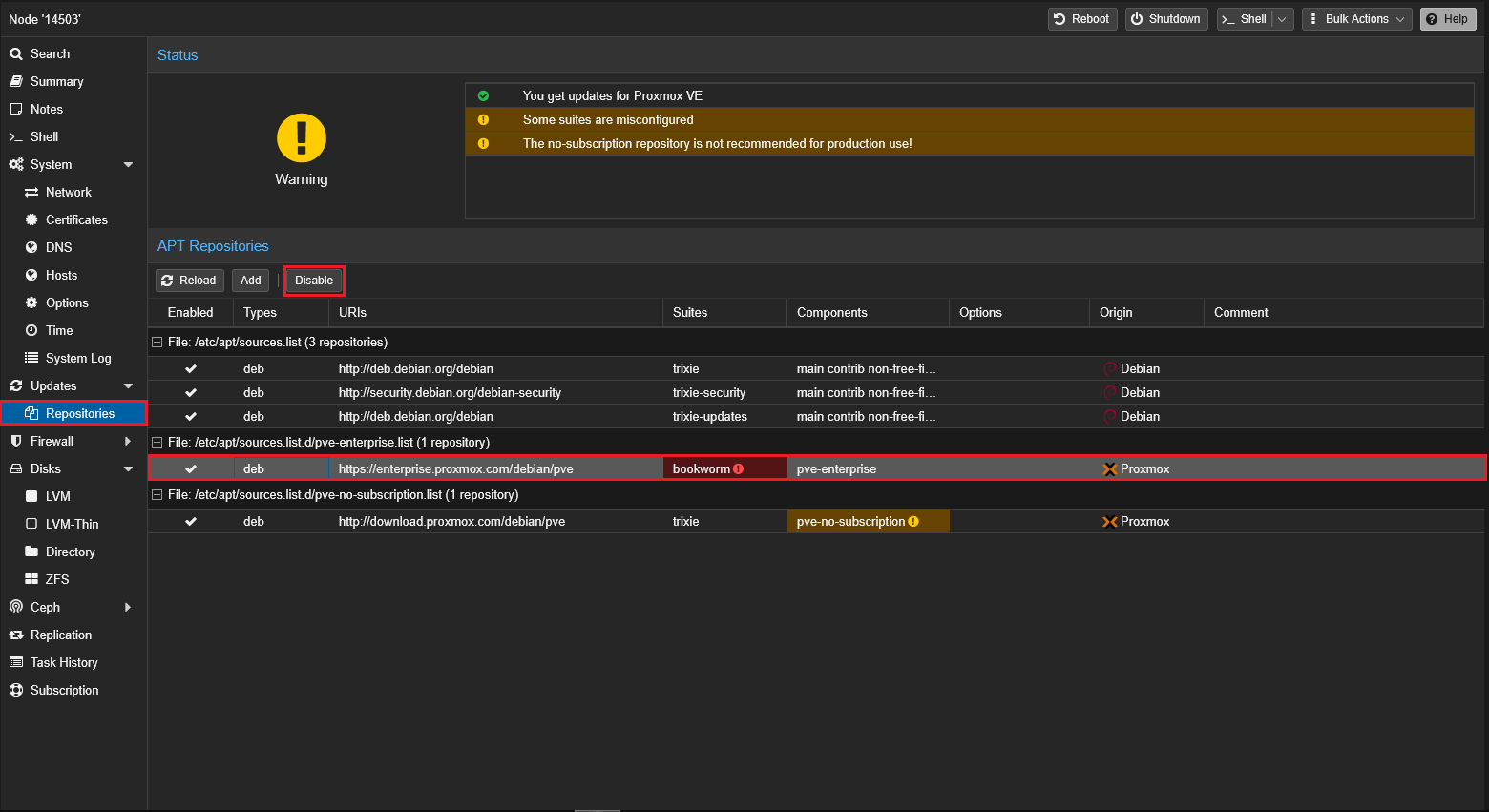

Disable the enterprise repository if there is no subscription:

Node > Repositories > pve-enterprise > Disable. Keep pve-no-subscription:

Terminal Commands:
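The same switch can be made from a shell on the node. The sketch below assumes that Proxmox VE 9 keeps its APT sources as deb822 files under /etc/apt/sources.list.d/ and that the enterprise repository lives in pve-enterprise.sources; the helper name disable_deb822_source is made up for this example, so check the actual file names on your node before running it.

```shell
#!/bin/sh
# Mark every stanza of a deb822 .sources file as disabled.
# Assumption: GNU sed, which is the default on Debian/Proxmox.
disable_deb822_source() {
    file=$1
    if grep -q '^Enabled:' "$file"; then
        # An Enabled: field already exists, so flip it to "no".
        sed -i 's/^Enabled:.*/Enabled: no/' "$file"
    else
        # No Enabled: field yet, so add one in front of each Types: line.
        sed -i 's/^Types:/Enabled: no\nTypes:/' "$file"
    fi
}

# Usage on the node (the path is an assumption, verify it first):
#   disable_deb822_source /etc/apt/sources.list.d/pve-enterprise.sources
#   apt update
```

Running the helper twice is harmless: the second call only rewrites the existing Enabled: field.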

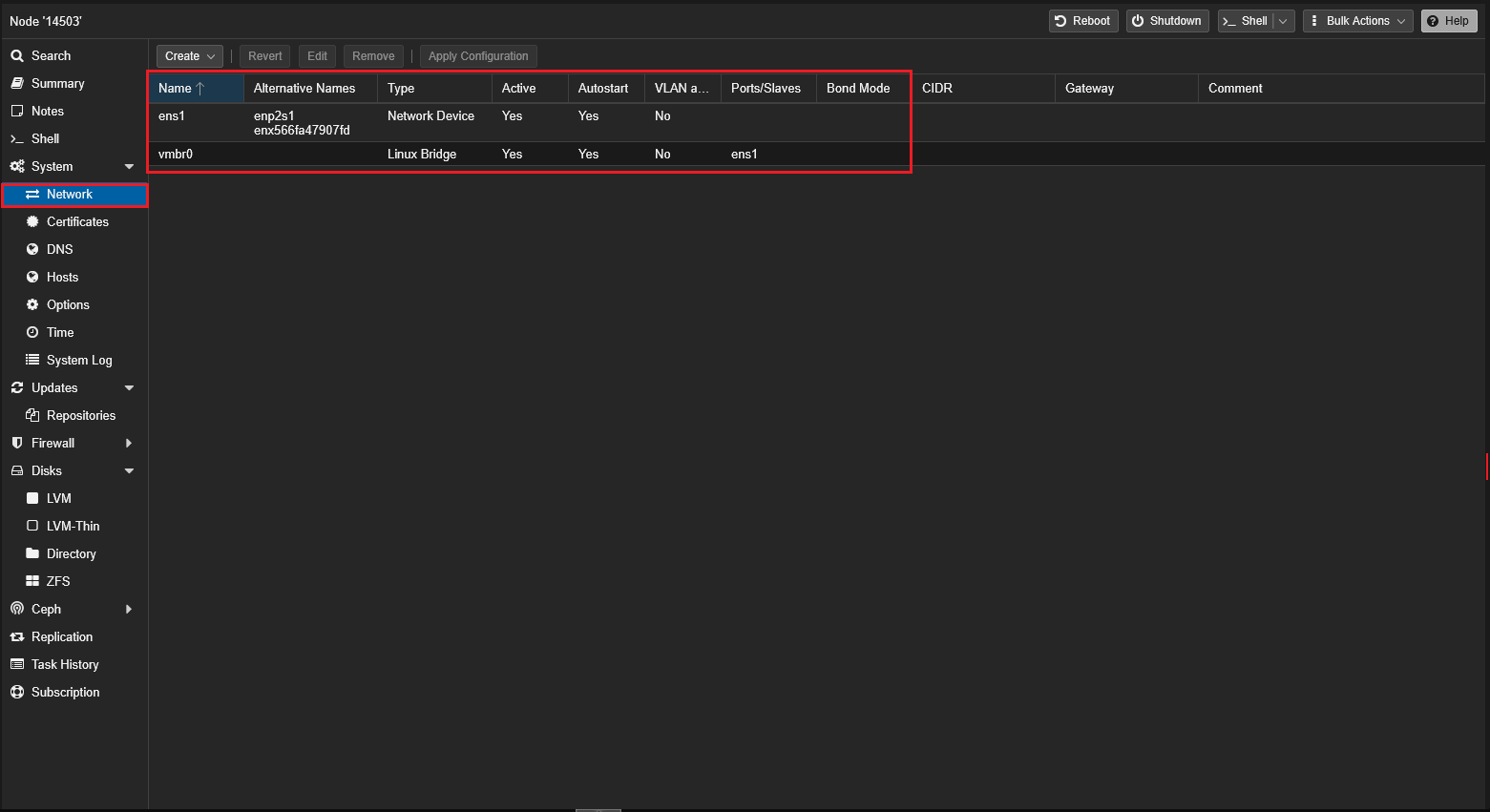

Network: Bridge vmbr0¶

The bridge vmbr0 is a virtual "switch" to which VMs are connected. It is bound to a physical interface (e.g., ens18/eno1).

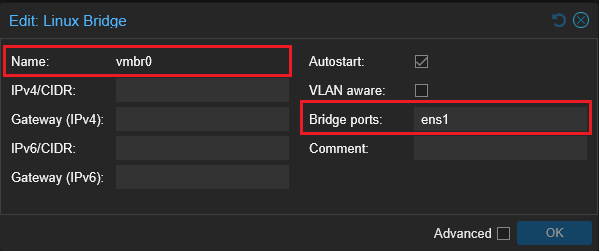

Through the Web Interface¶

- Node > System > Network.

- Verify that vmbr0 exists. If it does not exist or is not configured - Create > Linux Bridge:

  - Name: vmbr0;

  - IPv4/CIDR: specify your static IP in the format X.X.X.X/YY (leave blank if using DHCP);

  - Gateway (IPv4): default gateway (usually X.X.X.1) (do not enter if using DHCP);

  - Bridge ports: your physical interface, for example ens1;

  - Save > Apply configuration.

Through CLI (if web access is lost)¶

Example /etc/network/interfaces (ifupdown2):

    auto lo
    iface lo inet loopback

    auto ens18
    iface ens18 inet manual

    auto vmbr0
    iface vmbr0 inet static
        address 192.0.2.10/24
        gateway 192.0.2.1
        bridge-ports ens18
        bridge-stp off
        bridge-fd 0

Note

If the node should get its address via DHCP, replace iface vmbr0 inet static with iface vmbr0 inet dhcp and remove the address and gateway lines.

Common Errors:

- Incorrectly specified bridge-ports (wrong physical interface) > network "disappears". Correct the interface and execute ifreload -a.

- Wrong gateway or subnet entered > local connection exists but no internet access.
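The first error can be caught before the configuration is reloaded. The following is a minimal sketch, not part of Proxmox itself: the helper names bridge_ports and check_bridge_ports are hypothetical, and the check only confirms that each interface named in bridge-ports is known to the kernel.

```shell
#!/bin/sh
# Print the ports listed under "bridge-ports" for one bridge in an
# ifupdown-style interfaces file. Arguments: file, bridge name.
bridge_ports() {
    awk -v br="$2" '
        $1 == "iface"                     { cur = $2 }
        cur == br && $1 == "bridge-ports" { for (i = 2; i <= NF; i++) print $i }
    ' "$1"
}

# Report every listed port that does not exist on this machine.
# Returns non-zero if at least one port is missing.
check_bridge_ports() {
    missing=0
    for port in $(bridge_ports "$1" "$2"); do
        [ "$port" = none ] && continue
        if [ ! -e "/sys/class/net/$port" ]; then
            echo "missing interface: $port"
            missing=1
        fi
    done
    return "$missing"
}

# Usage on the node:
#   check_bridge_ports /etc/network/interfaces vmbr0 && ifreload -a
```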

Disks and Storage¶

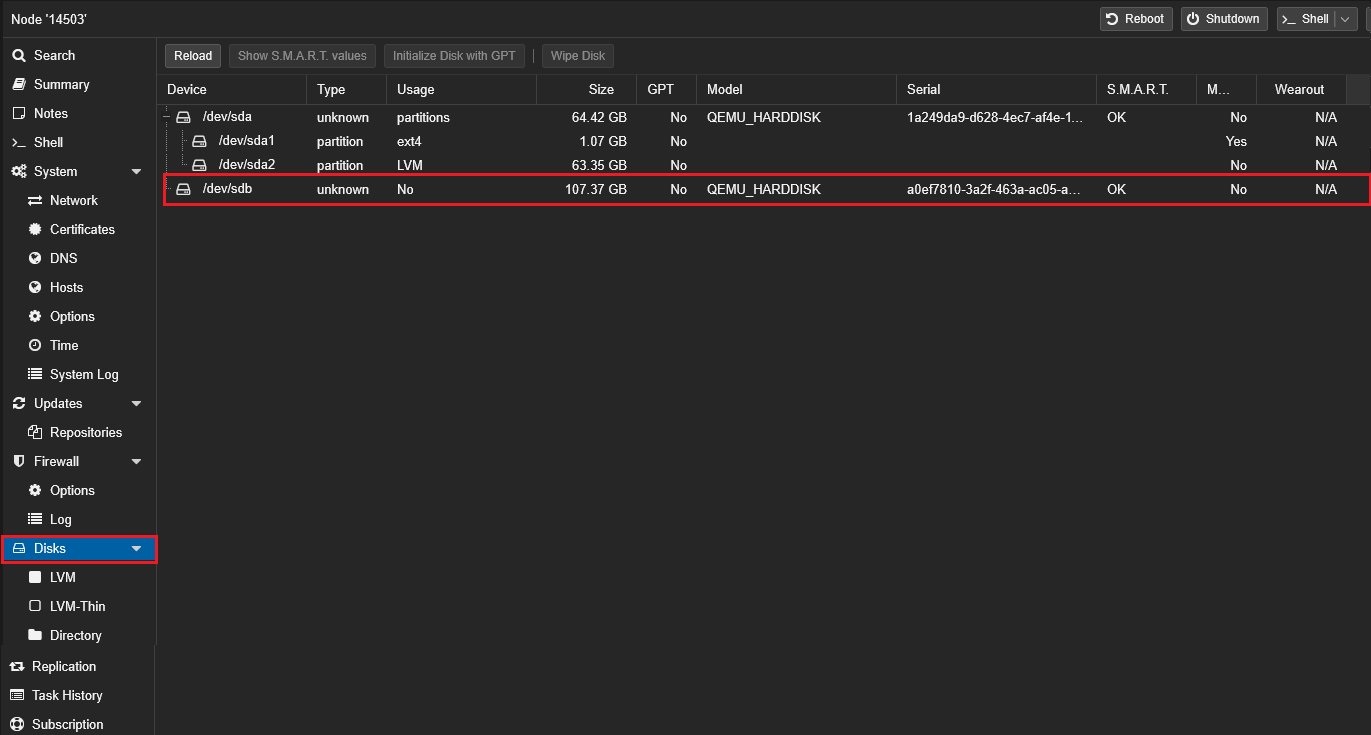

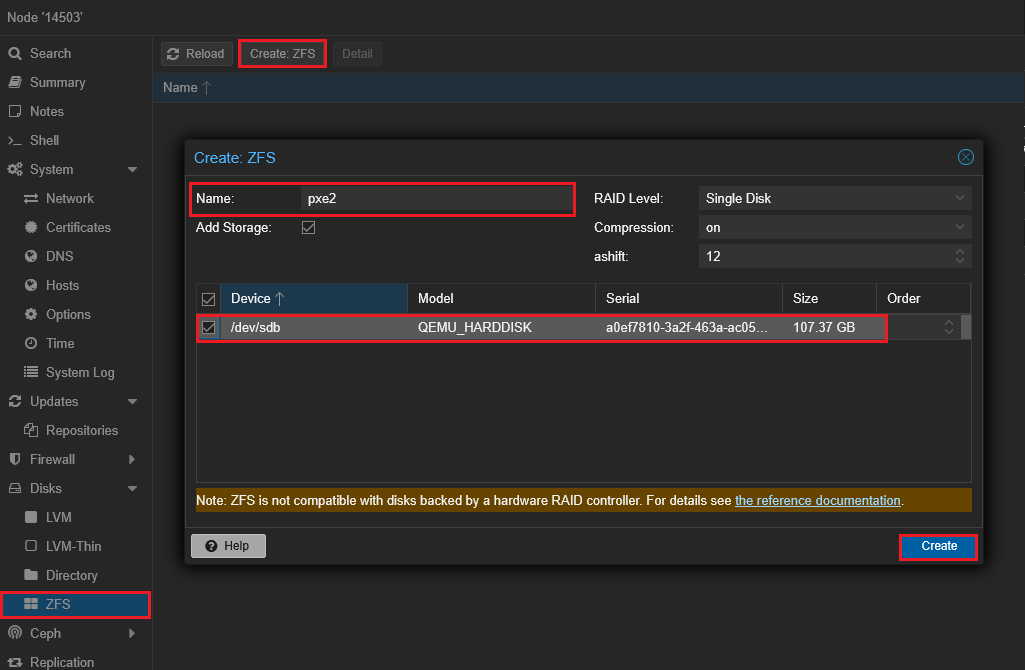

Add a Second Disk for VM Storage¶

-

Node > Disks: ensure that the new disk is visible (e.g., sdb).

-

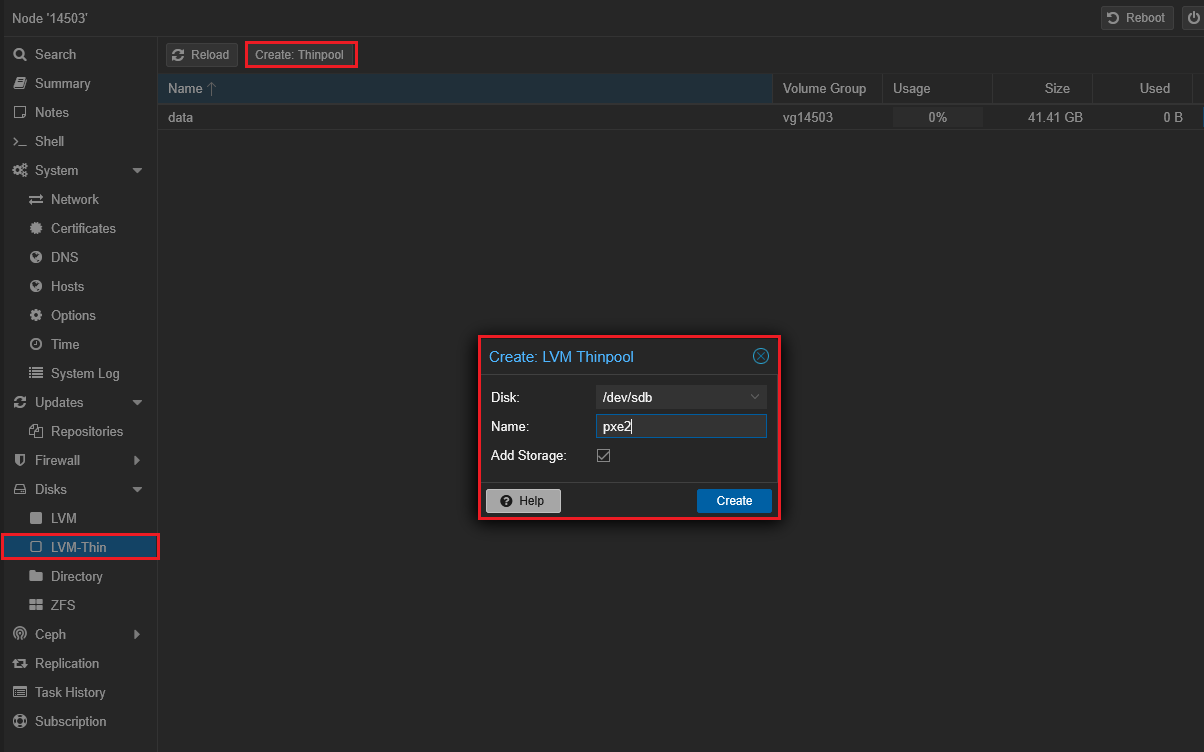

Option A - LVM-Thin (convenient for snapshots):

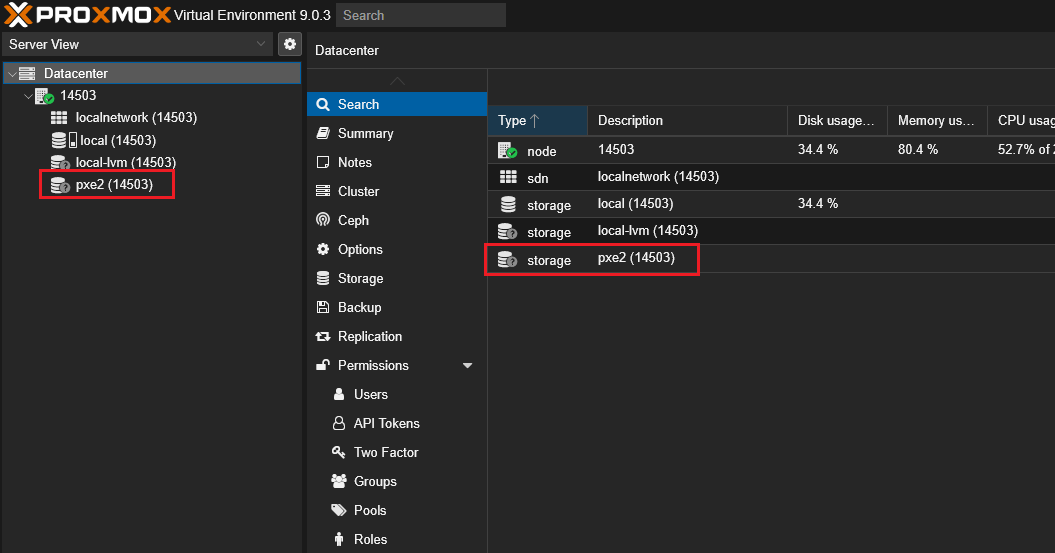

- Disks > LVM-Thin >

Create: select the disk > specify VG name (e.g., pve2) and thin-pool (e.g., data2).

- The storage will appear in Datacenter > Storage.

- Disks > LVM-Thin >

-

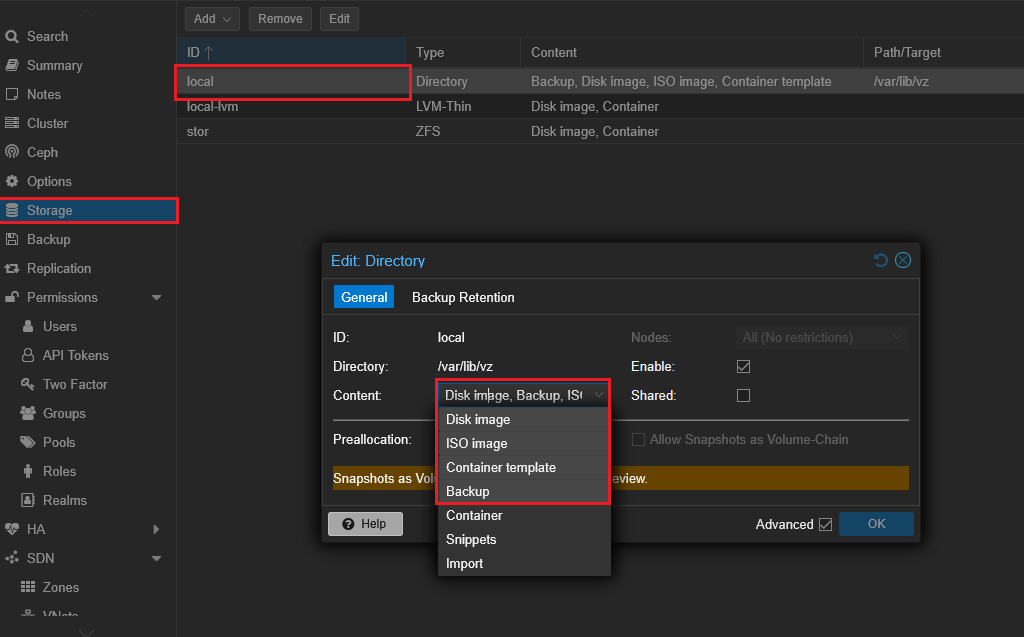

Option B - Directory:

- Create a file system (Disks > ZFS or manually

mkfs.ext4), mount to/mnt/...

- Datacenter > Storage >

Add>Directory> path/mnt/...> enable Disk image, ISO image (as needed).

- Create a file system (Disks > ZFS or manually

Note

For ZFS, choose a profile that fits the available RAM (8 GB or more is recommended). On a low-end VDS, LVM-Thin or Directory is the better choice.

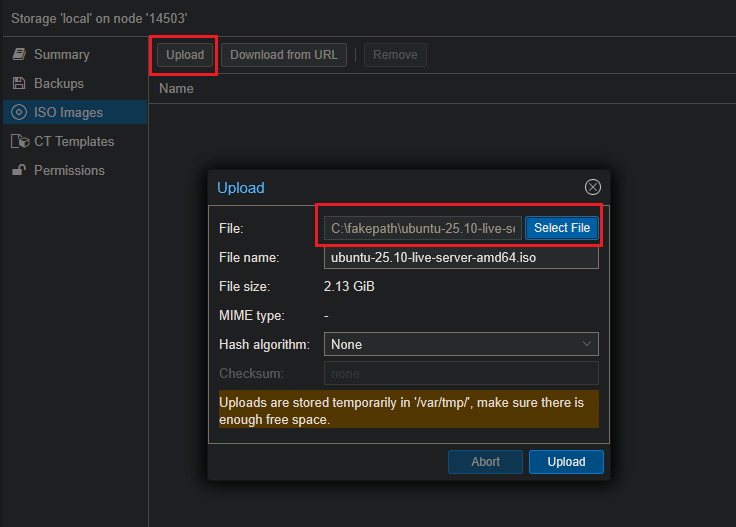

Loading ISO Images¶

ISO images can be loaded in two ways.

A. Through the Web Interface¶

- Datacenter > Storage > (select a storage with type ISO, e.g., local) > Content.

- Upload > select the local file ubuntu-25.10-live-server-amd64.iso > wait for upload completion.

B. Through the Node (CLI)¶

Example of downloading Ubuntu 25.10 ISO to local storage:
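A minimal sketch of this step follows. The download URL is an assumption based on the usual releases.ubuntu.com layout, the target directory is the default ISO path of the local storage, and the helper names fetch_iso and verify_sha256 are made up for illustration.

```shell
#!/bin/sh
# Assumptions: URL layout of releases.ubuntu.com and the default ISO
# directory of the "local" storage. Adjust both if your setup differs.
ISO_URL="https://releases.ubuntu.com/25.10/ubuntu-25.10-live-server-amd64.iso"
ISO_DIR="/var/lib/vz/template/iso"

# Download an ISO into a directory, resuming a partial file if one exists.
# Arguments: URL, target directory.
fetch_iso() {
    wget --continue --directory-prefix="$2" "$1"
}

# Compare a file's SHA-256 with an expected value.
# Arguments: file, expected hex digest. Succeeds only on an exact match.
verify_sha256() {
    actual=$(sha256sum "$1" | awk '{ print $1 }')
    [ "$actual" = "$2" ]
}

# Usage on the node (take <sha256> from the SHA256SUMS file next to the ISO):
#   fetch_iso "$ISO_URL" "$ISO_DIR"
#   verify_sha256 "$ISO_DIR/ubuntu-25.10-live-server-amd64.iso" "<sha256>" && echo OK
```

Checking the digest before attaching the ISO to a VM rules out a truncated download as the cause of a failed installer boot.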

Make sure the file ends up in the .../template/iso folder of the desired storage and that the storage type includes ISO Image.

Create First VM (Ubuntu 25.10)¶

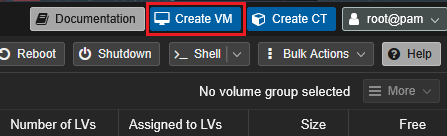

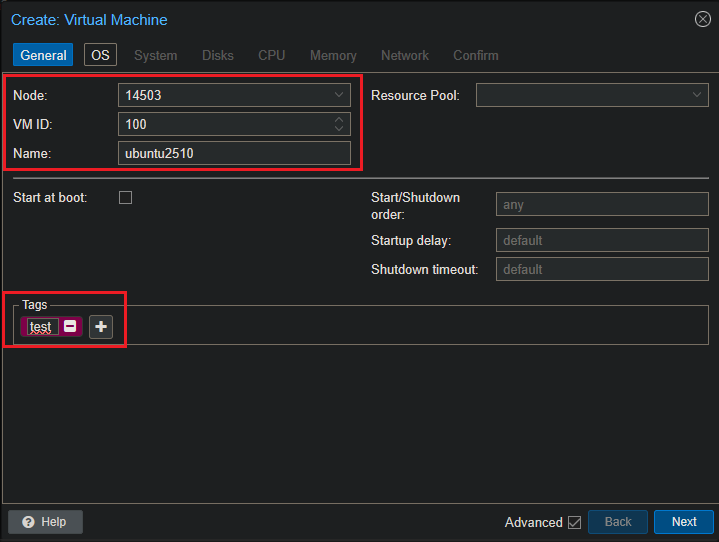

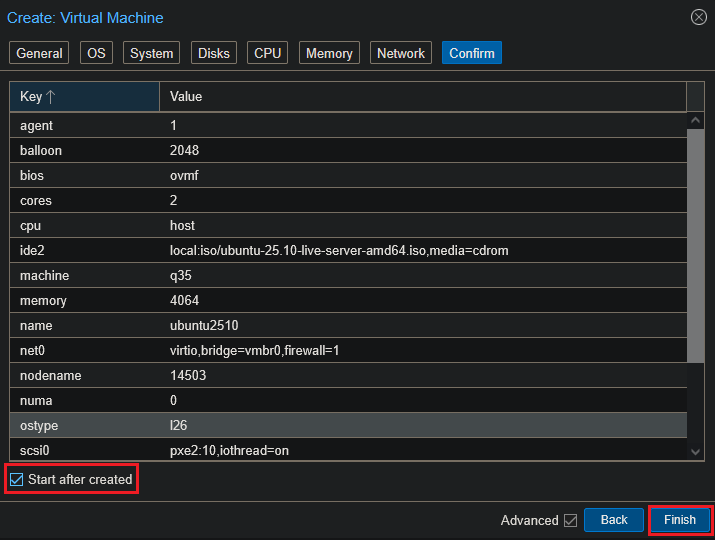

Example: Ubuntu Server 25.10 (VPS with 2 vCPU)

Click Create VM (top right):

General: Leave ID as default, Name - ubuntu2510 (or your own):

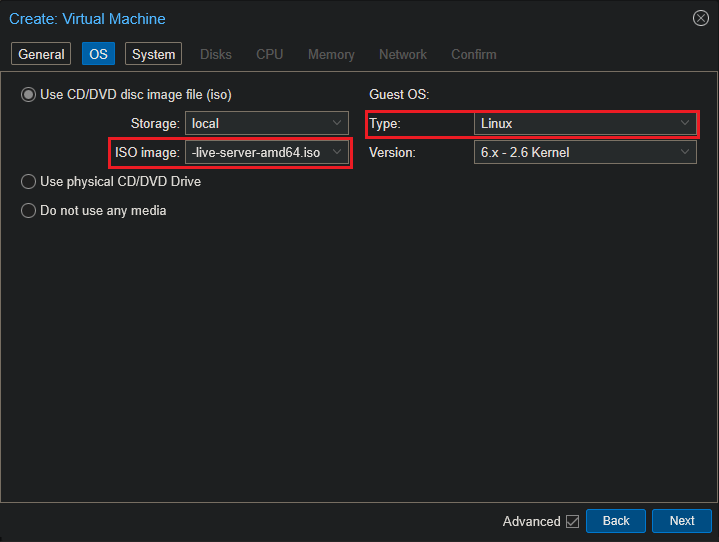

OS: select ISO ubuntu-25.10-live-server-amd64.iso, Type: Linux:

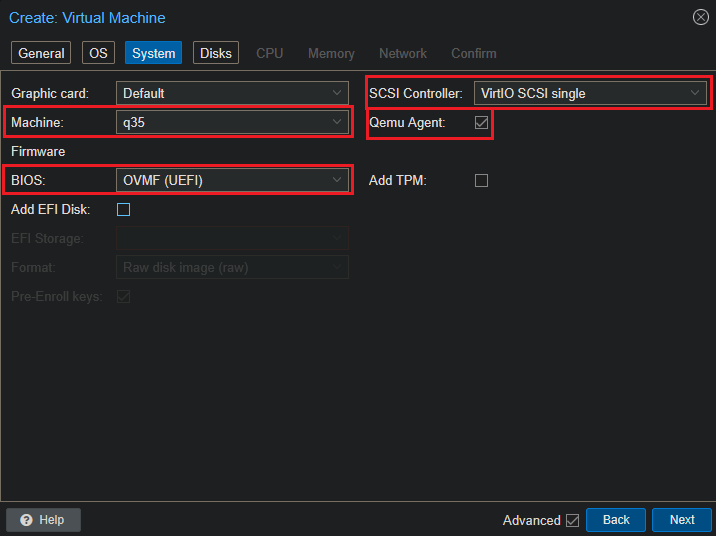

System:

- Graphics card: Default;

- BIOS: OVMF (UEFI);

- Machine: q35;

- SCSI Controller: VirtIO SCSI single;

- (Optionally) enable Qemu Agent in Options after VM creation (see below):

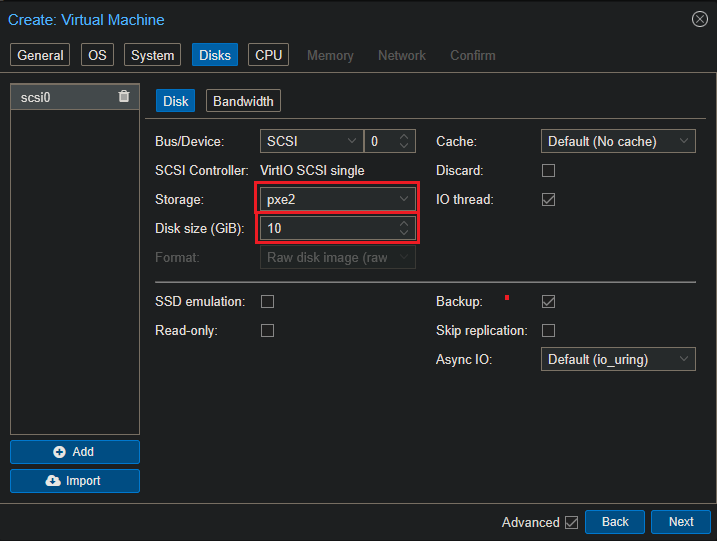

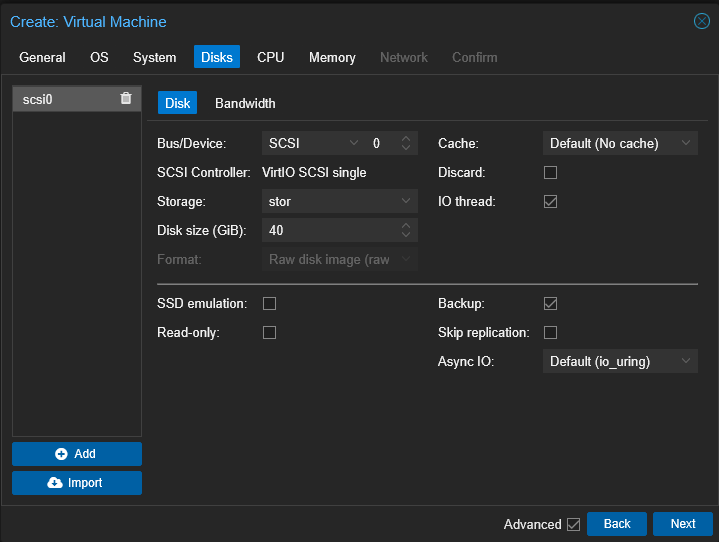

Disks:

- Bus/Device: SCSI;

- SCSI Controller: VirtIO SCSI single;

- Storage: your LVM-Thin/Directory;

- Size: 20–40 GB (minimum 10–15 GB for testing);

- Discard (TRIM): enable on a thin-pool:

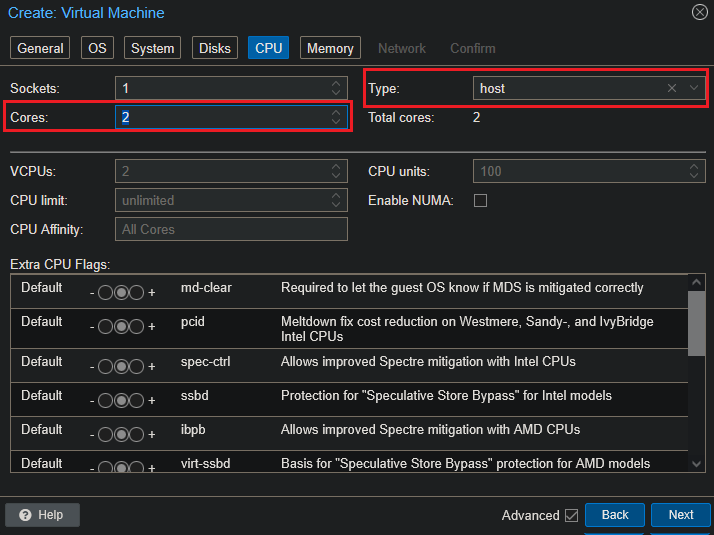

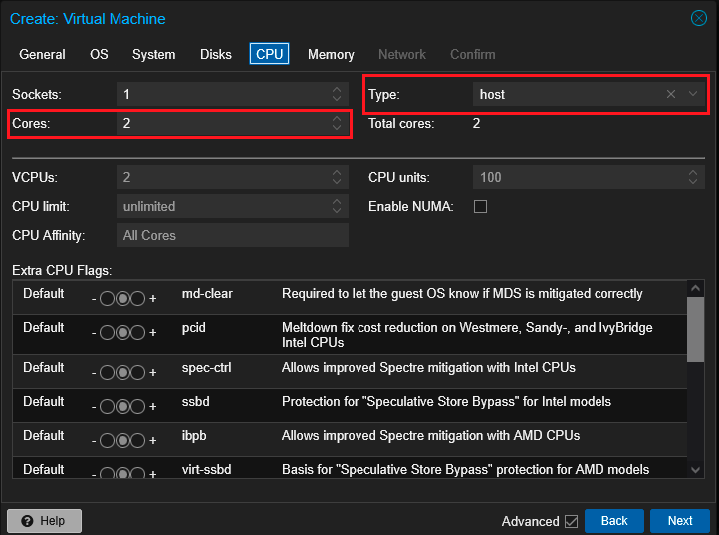

CPU:

- Sockets: 1;

- Cores: 2 (according to your VPS);

- Type: host (best performance):

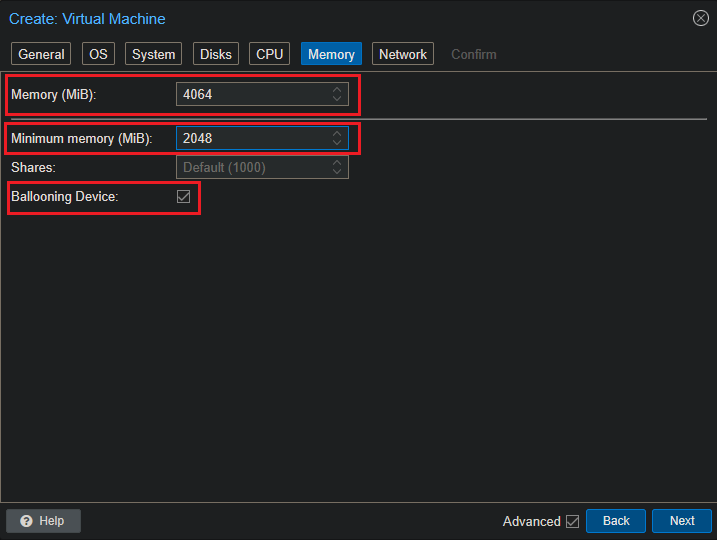

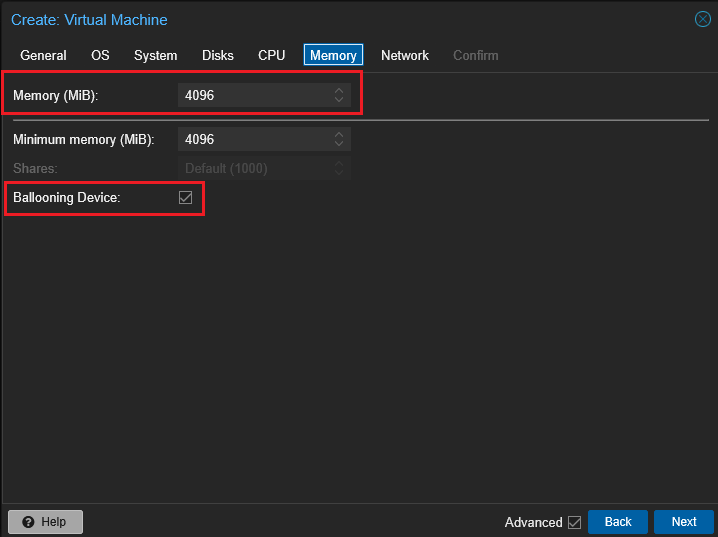

Memory:

- 2048–4096 MB. You can enable Ballooning (e.g., Min 1024, Max 4096):

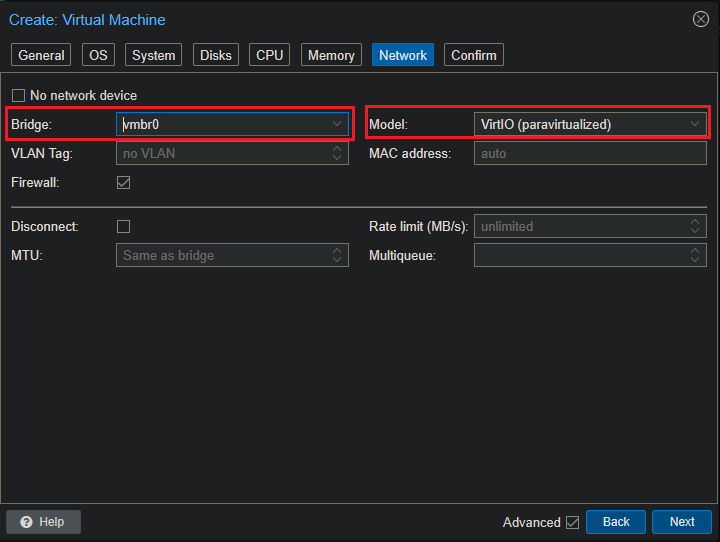

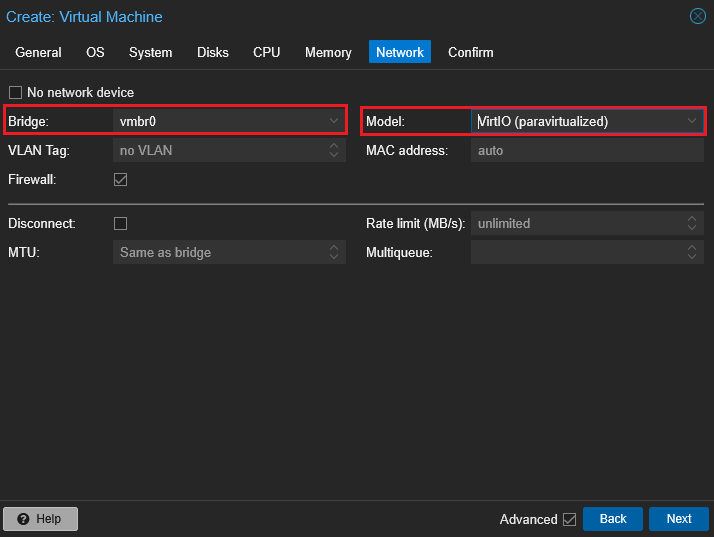

Network:

- Model: VirtIO (paravirtualized);

- Bridge: vmbr0;

- If VLAN is needed: set VLAN Tag.

Confirm: check settings, mark Start after created and click Finish:

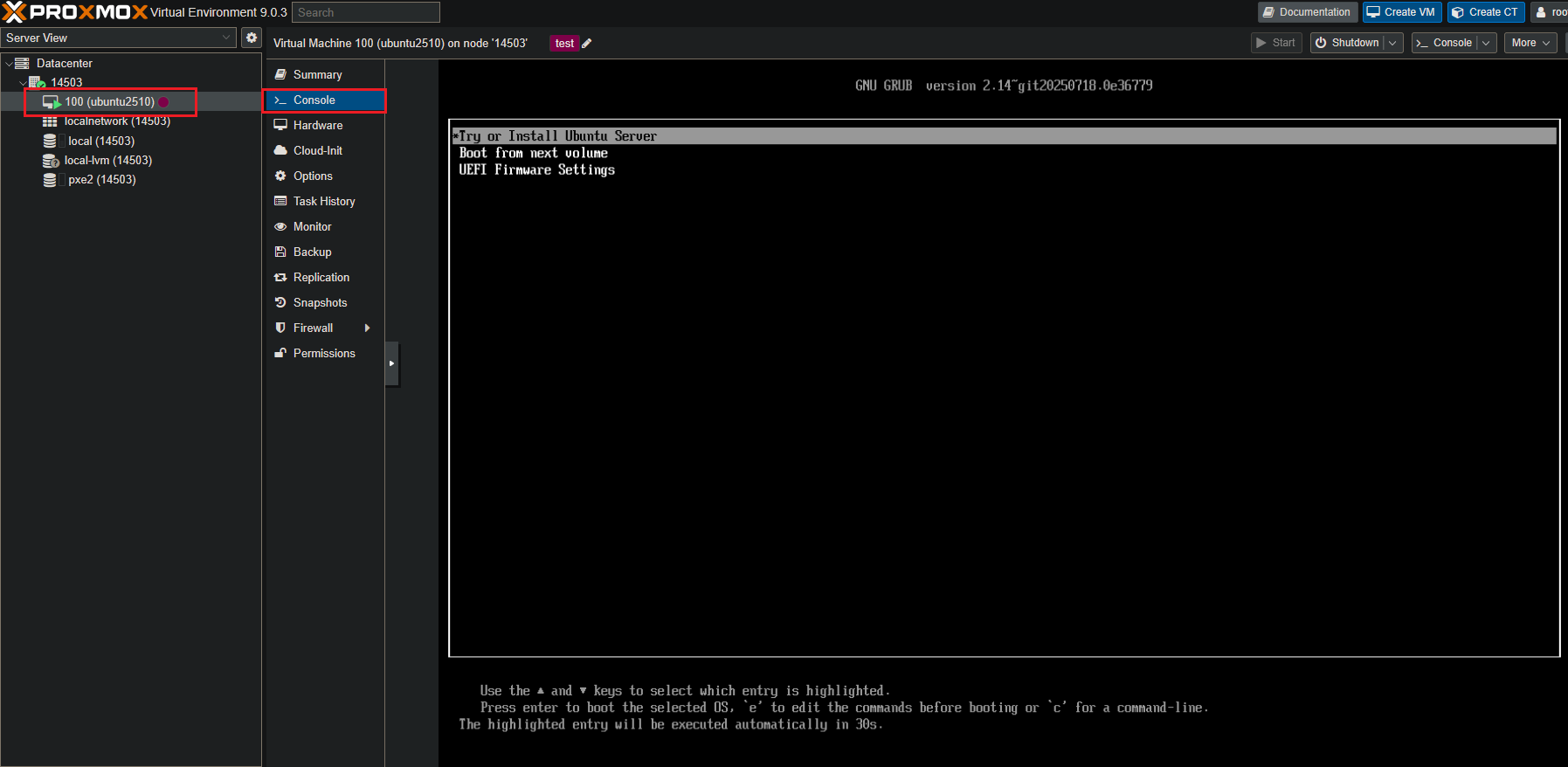
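The wizard settings above can also be expressed as a single qm create call. This is a sketch under assumptions: VM ID 100 is free, VM disks go to a storage named local-lvm, and the ISO was uploaded to local. The function name create_ubuntu_vm and the APPLY switch are made up for this example; by default the function only prints the command so it can be reviewed first.

```shell
#!/bin/sh
# Build a "qm create" command that mirrors the wizard choices:
# OVMF + q35, VirtIO SCSI single, 2 cores of type host, ballooning,
# VirtIO NIC on vmbr0 and the Ubuntu ISO as a CD-ROM.
# Argument: VM ID (defaults to 100, an assumption).
create_ubuntu_vm() {
    vmid=${1:-100}
    set -- qm create "$vmid" \
        --name ubuntu2510 --ostype l26 \
        --bios ovmf --machine q35 --efidisk0 local-lvm:1,efitype=4m \
        --scsihw virtio-scsi-single --scsi0 local-lvm:32,discard=on \
        --sockets 1 --cores 2 --cpu host \
        --memory 4096 --balloon 1024 \
        --net0 virtio,bridge=vmbr0 \
        --ide2 local:iso/ubuntu-25.10-live-server-amd64.iso,media=cdrom
    # Print by default; execute only when APPLY=1 is set.
    if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

# Usage on the node:
#   create_ubuntu_vm 100            # review the command
#   APPLY=1 create_ubuntu_vm 100    # create the VM
#   qm start 100
```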

OS Installation:¶

- Start the VM > Console (noVNC) > Try or Install Ubuntu.

- Installer:

  - DHCP/static IP as needed;

  - Disk: Use entire disk;

  - Profile: user/password;

  - OpenSSH server: enable.

- Reboot and log in via console/SSH.

Post-install:¶

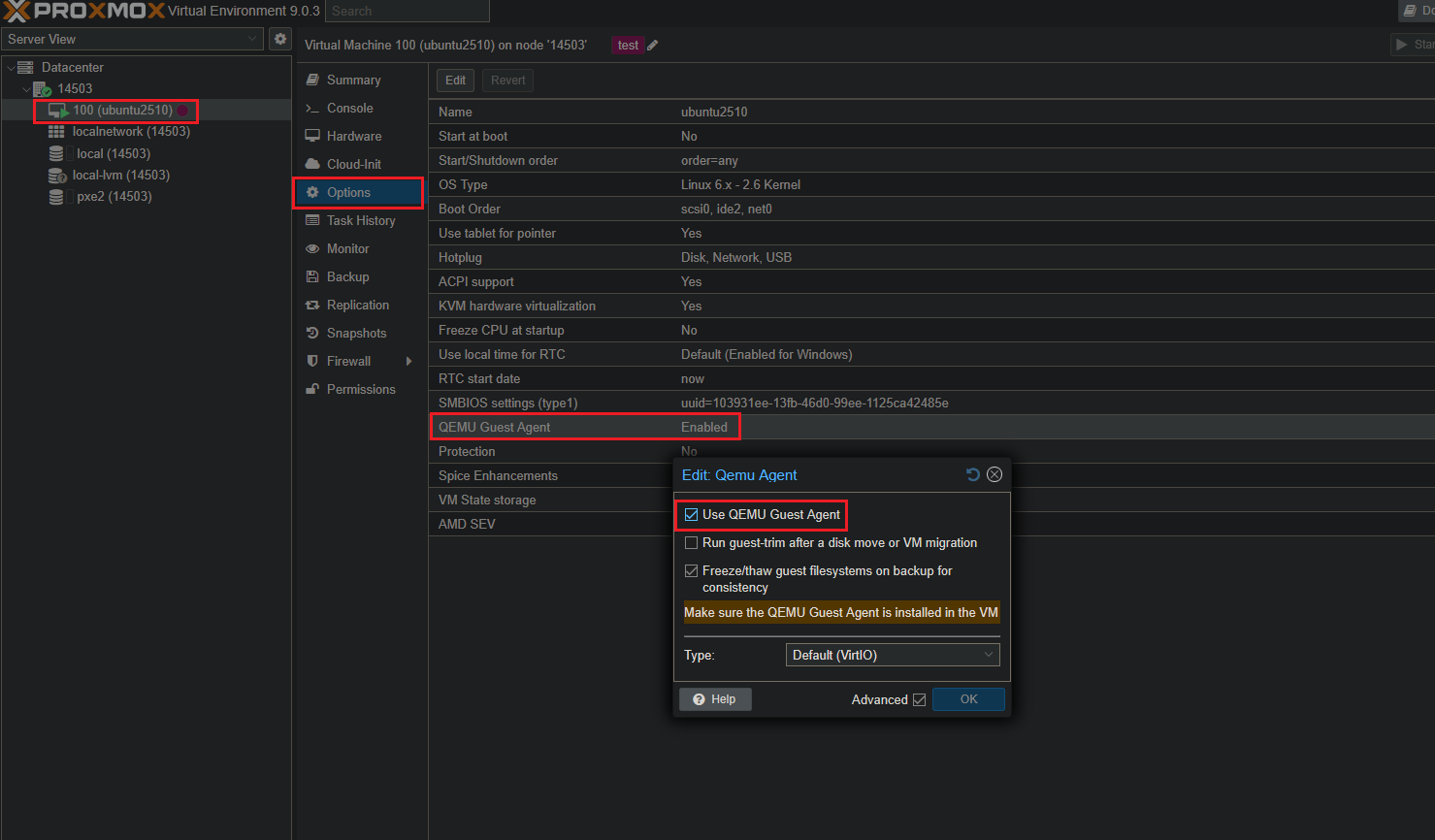

Install the guest agent inside the VM (on Ubuntu, the qemu-guest-agent package), then in Proxmox: VM > Options > Qemu Agent = Enabled.

Boot Order: if the VM still boots from the ISO - Options > Boot Order > move scsi0 above cdrom.

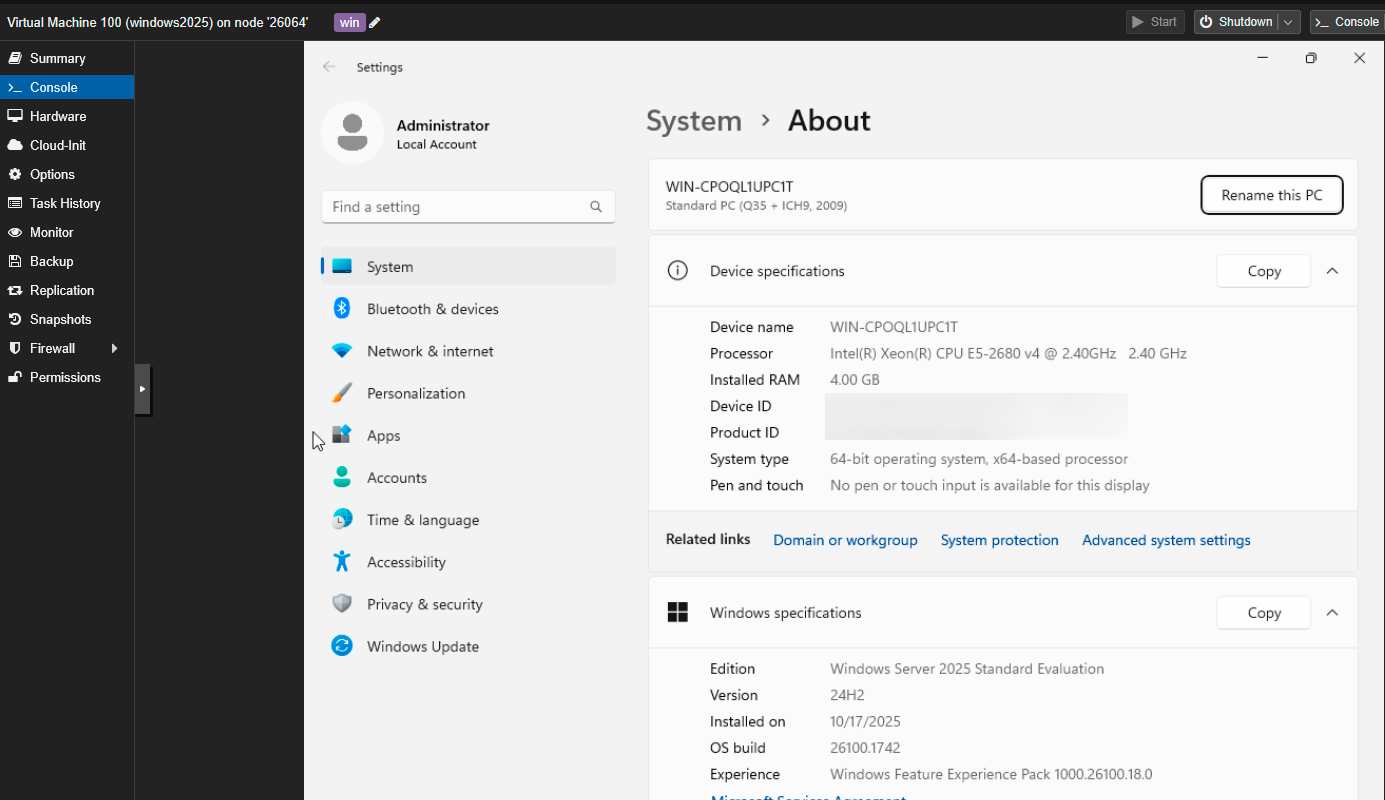

Windows Installation (for More Powerful Nodes)¶

Suitable for nodes with ≥4 vCPU/8 GB RAM. On a weak VPS, Windows may be unstable.

-

ISO: Download the ISOs of Windows Server (2019/2022/2025) and

virtio-win.iso(drivers) in Storage > Content:

-

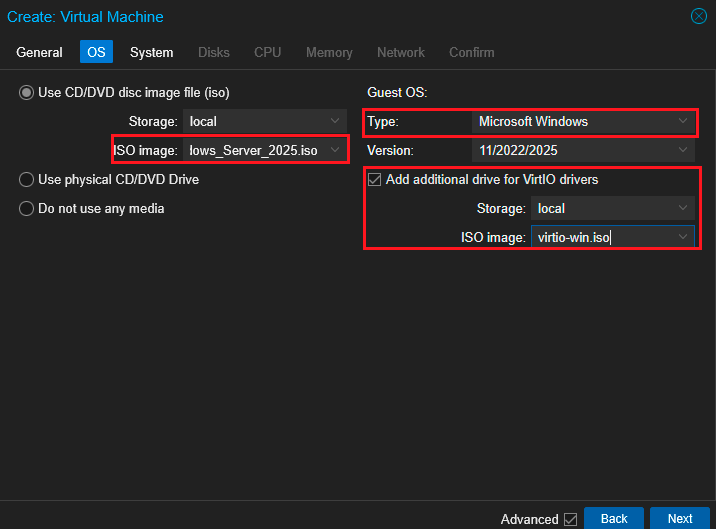

Create VM> OS:Microsoft Windows, select the installation ISO image. The optionAdd additional drive for VirtIO driversallows you to add a second CD with drivers:

-

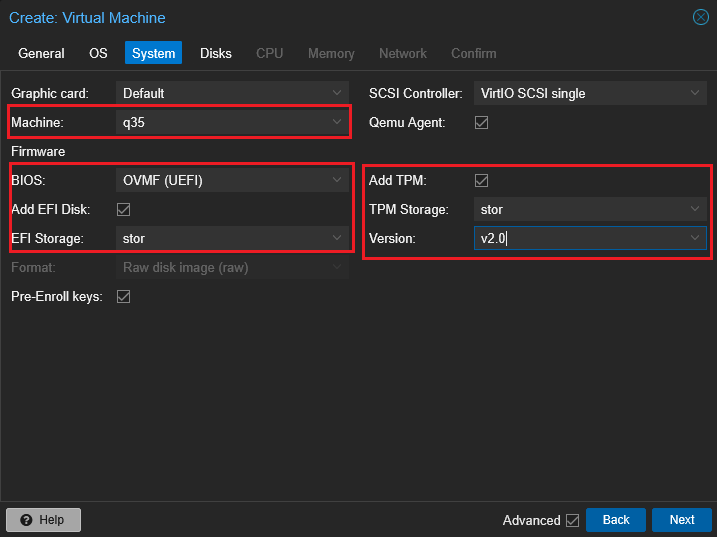

System:

BIOS OVMF(UEFI);- Machine:

q35; - If necessary, enable

Add EFI DiskandAdd TPM(for new Windows versions). If it doesn't start - trySeaBIOSand disableEFI/TPM:

- Machine:

-

Disks:

- Bus:

SCSI; - Controller:

VirtIO SCSI; - Size: 40–80 GB;

- Enable IO Threads:

- Bus:

-

CPU: 2–4 vCPUs;

- Type:

host:

- Type:

-

Memory: 4–8 GB:

-

Network:

Model VirtIO(paravirtualized),Bridge vmbr0:

-

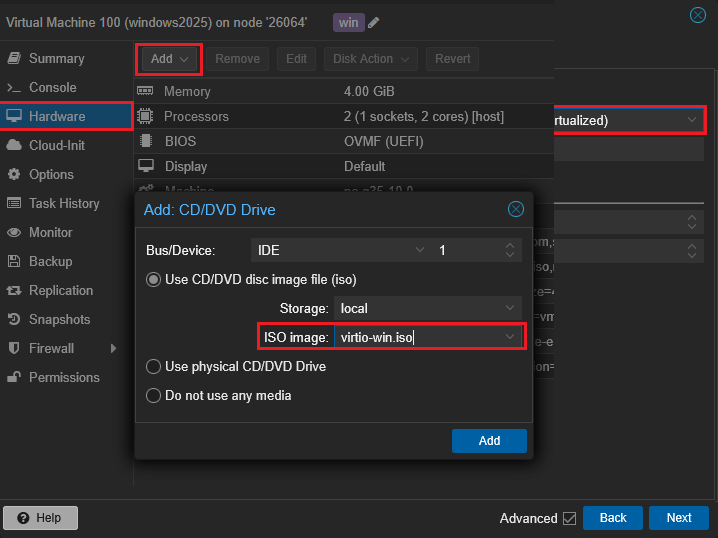

Confirm: Complete VM creation by clicking

Create, then in Hardware > CD/DVD Drive, attach a second ISO -virtio-win.iso:

-

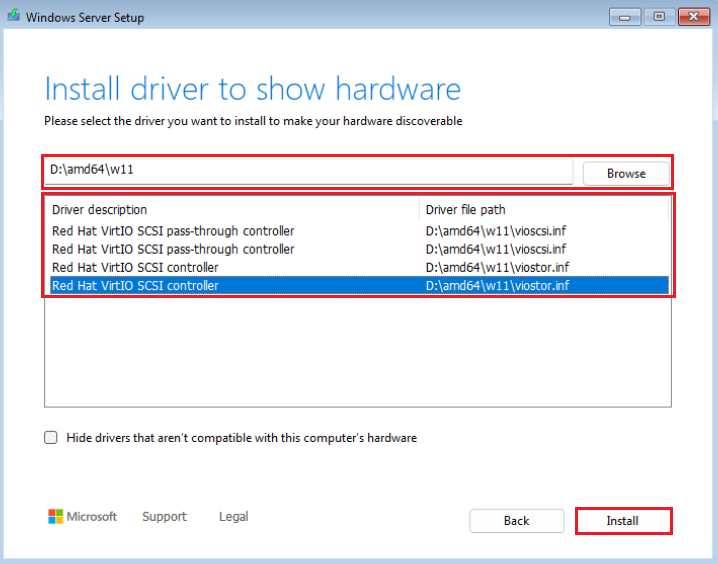

Windows Installer: On the disk selection step, click

Load Driver> specify the CD with VirtIO (vioscsi/viostor). After installation - set network drivers in Device Manager (NetKVM):

-

Guest Agent (optional): Install

Qemu Guest Agentfor Windows usingvirtio-win ISO:

Troubleshooting Windows:¶

- Black screen/doesn't boot: Change OVMF > SeaBIOS, disable EFI/TPM.

- No network: Ensure NIC = VirtIO and NetKVM driver is installed.

- Disk slowdowns: Make sure the disk = SCSI + virtio driver.

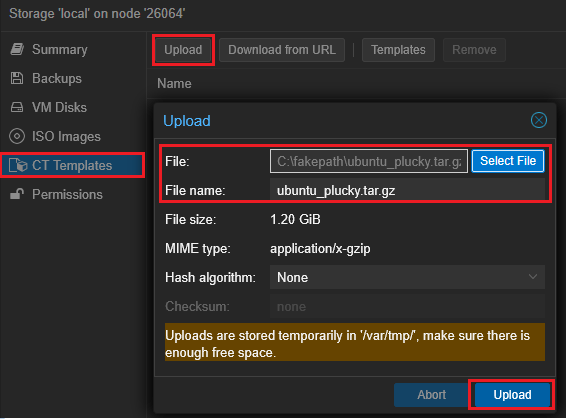

LXC Containers: Quick Start¶

Pre-made templates with minimal software are available in the template storage.

-

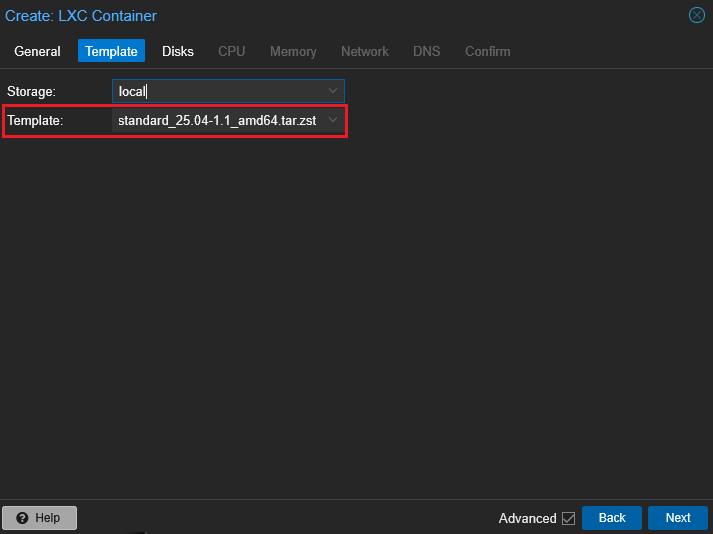

Datacenter > Storage > (select

storagewith typeTemplates)> **Content** > **Templates**. Download, for example:ubuntu-25.04-standard_*.tar.zst` or another needed template:

-

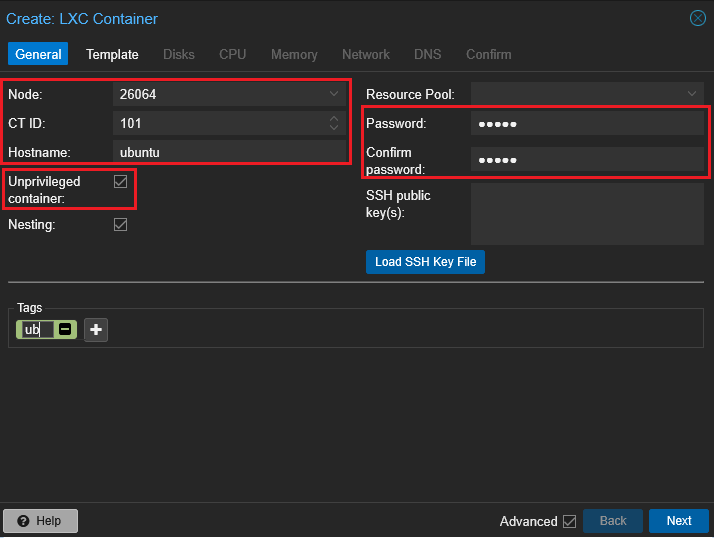

Click

Create CT:- General: Specify ID/Name,

Unprivileged container=Enabled(safer by default). Set passwordrootor SSH key.

- Template: Select the downloaded template.

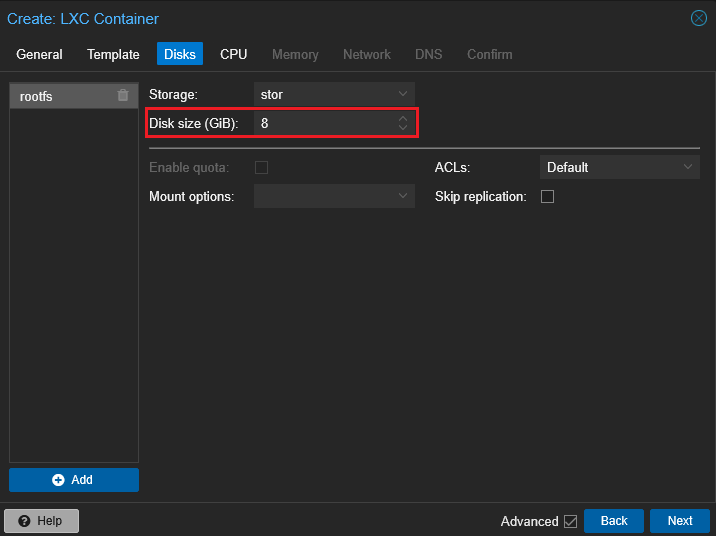

- Disks: Storage/Size (for example, 8–20 GB).

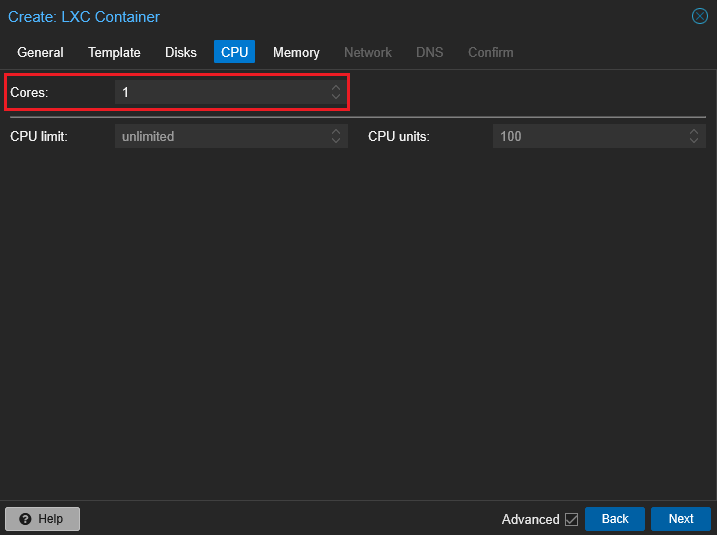

- CPU/RAM: According to the task (for example, 1 vCPU, 1–2 GB RAM).

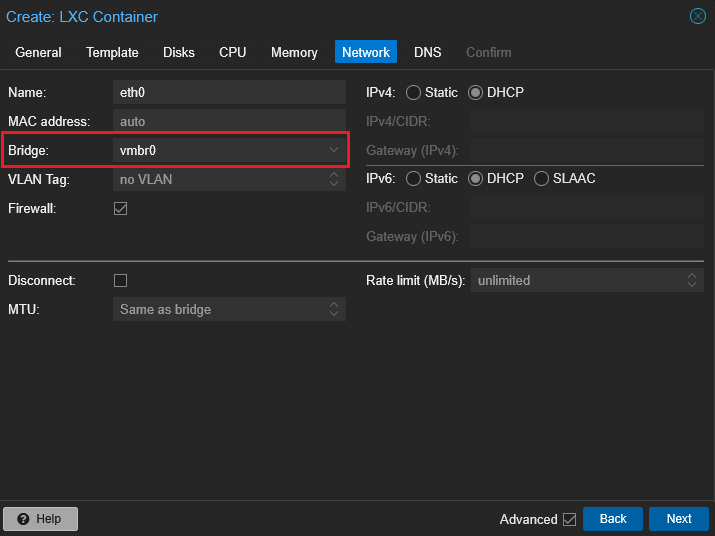

- Network:

Bridge vmbr0, IPv4 =DHCP(or Static if needed). VLAN Tag as necessary.

- General: Specify ID/Name,

Tip for Network: If you are using NAT on vmbr1, then set it and specify the static IP.

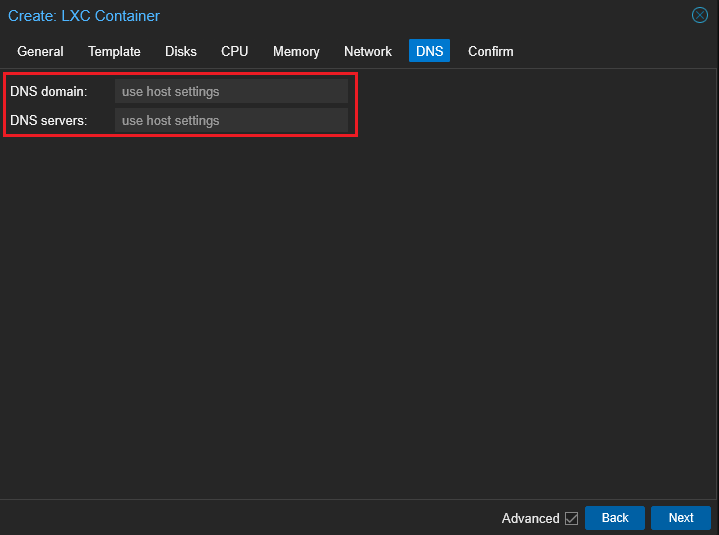

- **DNS**: Default from the host or your own.

- **Features**: Optionally enable `nesting`, `fuse`, `keyctl` (depends on applications in the container).

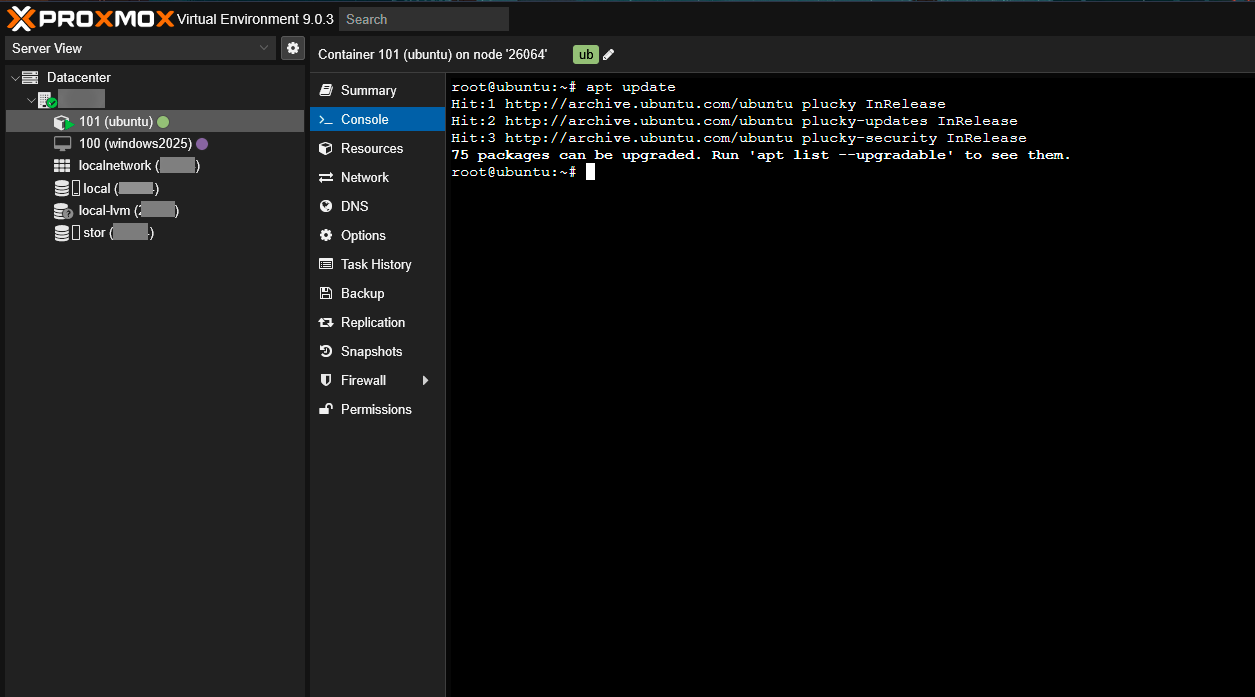

Start at boot/Start after created: as desired.- After starting: log in via SSH and install software from the template or packages:

In LXC, Qemu Guest Agent is not needed. Mounting host directories is done through MP (Mount points).
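The container wizard has a CLI counterpart in pct create. The sketch below assumes that templates are stored on local, the root disk goes to local-lvm, and the template file name is passed in by the caller (list candidates with pveam available --section system). The function name create_ct and the APPLY switch are hypothetical; without APPLY=1 the command is only printed.

```shell
#!/bin/sh
# Build a "pct create" command for an unprivileged container with
# 1 core, 1 GB RAM, an 8 GB root disk and DHCP on vmbr0.
# Arguments: container ID, template file name on the "local" storage.
create_ct() {
    ctid=$1
    template=$2
    set -- pct create "$ctid" "local:vztmpl/$template" \
        --hostname "ct-$ctid" --unprivileged 1 \
        --cores 1 --memory 1024 --rootfs local-lvm:8 \
        --net0 name=eth0,bridge=vmbr0,ip=dhcp
    # Print by default; execute only when APPLY=1 is set.
    if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

# Usage on the node (<template> is the file name shown by pveam):
#   pveam update
#   pveam download local <template>
#   APPLY=1 create_ct 200 <template>
#   pct start 200 && pct enter 200
```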

Typical VM Profiles¶

- Ubuntu/Debian (Web/DB/Utility): SCSI + VirtIO, UEFI (OVMF), 1–2 vCPUs, 2–4 GB RAM, disk 20–60 GB; enable Qemu Guest Agent.

- Lightweight Services (DNS/DHCP/Proxy): 1 vCPU, 1–2 GB RAM, disk 8–20 GB.

- Container Hosts (Docker/Podman): 2–4 vCPUs, 4–8 GB RAM; separate disk/pool for data.

Alternative to ISO: You can use Ubuntu 25.10 Cloud-Init images for quick cloning with auto-configured network/SSH. Suitable if you plan to have many similar VMs.

Connecting VMs and LXC in One Network¶

Attention

If you are using Proxmox on a VPS, you will only get one external IP and MAC without additional IPs, VLANs, and DHCP, so:

- The public IP must be assigned only to the vmbr0 bridge, which bridges the single interface (e.g., ens18).

- VMs/CTs cannot obtain their own public IPs; they must use an internal subnet (e.g., 10.10.0.0/24) and reach the internet via NAT on the host.

- The examples below with addresses 10.10.0.x refer to internal networks (vmbr1), not the external bridge vmbr0.

Basic Variant (One Subnet):¶

- Ensure all VMs/containers have Bridge = vmbr0 (or vmbr1).

- If using a DHCP network - addresses are assigned automatically; if static - specify IPs in one subnet (for example, 10.10.0.2/24, 10.10.0.3/24) and a common gateway 10.10.0.1.

- Optionally, VLAN: Specify VLAN Tag in the network card settings of VMs/CT and ensure that the switch's uplink allows this VLAN.

- Inside the OS, check that the local firewall does not block ICMP/SSH/HTTP.

- Test: From the Ubuntu VM, run ping <IP-LXC> and vice versa; ip route and traceroute will help with issues.

When Different Subnets:¶

- Proxmox itself does not route between bridges. A router (separate VM with Linux/pfSense) or NAT on the host is needed.

- Simple NAT on the host (example):

Enable forwarding:
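One way to enable forwarding persistently is a sysctl drop-in file. This is a sketch, assuming the node reads /etc/sysctl.d/*.conf at boot (the systemd default on Debian); the function name write_forwarding_conf and the file name 99-ip-forward.conf are arbitrary choices for this example.

```shell
#!/bin/sh
# Write a sysctl drop-in that enables IPv4 forwarding.
# Argument: target directory (defaults to /etc/sysctl.d).
write_forwarding_conf() {
    dir=${1:-/etc/sysctl.d}
    printf 'net.ipv4.ip_forward = 1\n' > "$dir/99-ip-forward.conf"
}

# Usage on the node:
#   write_forwarding_conf         # persists across reboots
#   sysctl --system               # load the setting now
#   sysctl net.ipv4.ip_forward    # should report 1
```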

NAT from vmbr1 to the internet via vmbr0. For persistence, add the rules to a script in /etc/network/if-up.d/ or use nftables:

    EXT_IF=vmbr0
    iptables -t nat -C POSTROUTING -s 10.10.0.0/24 -o $EXT_IF -j MASQUERADE 2>/dev/null || \
      iptables -t nat -A POSTROUTING -s 10.10.0.0/24 -o $EXT_IF -j MASQUERADE
    iptables -C FORWARD -i vmbr1 -o $EXT_IF -j ACCEPT 2>/dev/null || \
      iptables -A FORWARD -i vmbr1 -o $EXT_IF -j ACCEPT
    iptables -C FORWARD -i $EXT_IF -o vmbr1 -m state --state RELATED,ESTABLISHED -j ACCEPT 2>/dev/null || \
      iptables -A FORWARD -i $EXT_IF -o vmbr1 -m state --state RELATED,ESTABLISHED -j ACCEPT

Attention

On a VPS with a single IP, all VMs/CTs are typically located in an internal subnet (e.g., 10.10.0.0/24) on the vmbr1 bridge and exit to the internet via NAT on the host using the sole external IP on vmbr0.

Note

Using NAT is suitable for merging LXC and ISO‑installed OSes into a single subnet.
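The internal bridge vmbr1 used in this section has to exist on the node before guests can be attached to it. The following sketch prints an /etc/network/interfaces stanza for such a bridge; it has no physical port, and the host address 10.10.0.1/24 doubles as the gateway for the guests. The function name internal_bridge_stanza is made up for illustration.

```shell
#!/bin/sh
# Print an interfaces stanza for an internal bridge without a physical port.
# Arguments: bridge name, host address in CIDR form (the guests' gateway).
internal_bridge_stanza() {
    cat <<EOF
auto $1
iface $1 inet static
    address $2
    bridge-ports none
    bridge-stp off
    bridge-fd 0
EOF
}

# Usage on the node: review the output, then append it and reload.
#   internal_bridge_stanza vmbr1 10.10.0.1/24
#   internal_bridge_stanza vmbr1 10.10.0.1/24 >> /etc/network/interfaces
#   ifreload -a
```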

Backups and Templates¶

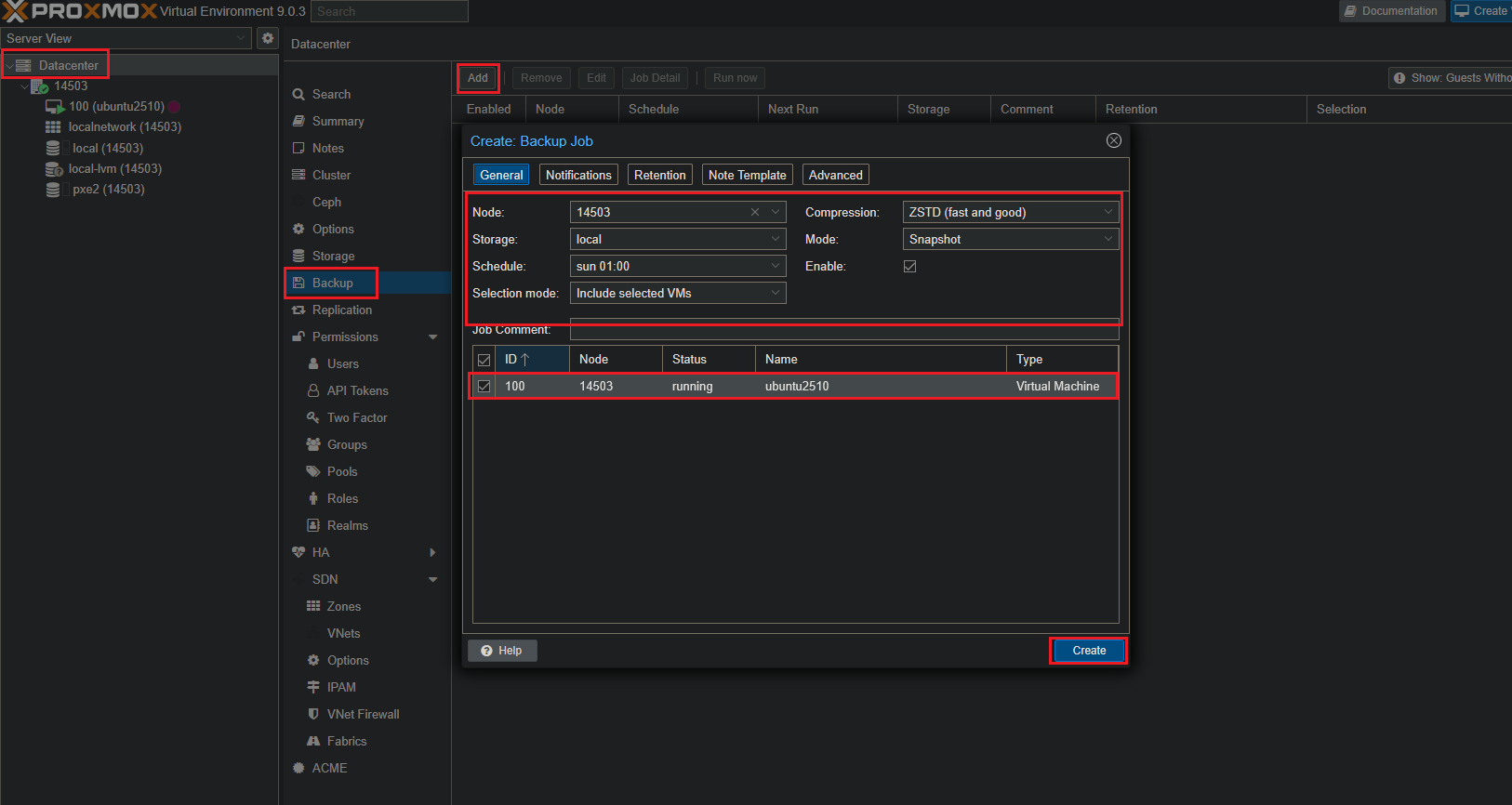

-

Backup: Datacenter > Backup or Node > Backup - set up

vzdumpschedule (storage, time, snapshot/stop mode):

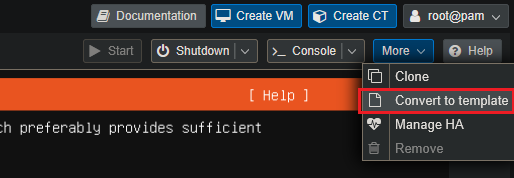

-

VM Template: After basic VM setup >

Convert to Template. Creating new VMs throughClonesaves time and eliminates errors:
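A one-off backup can also be started from the shell with vzdump. The sketch below assumes that the target storage accepts backups and uses --prune-backups for retention, since the maxfiles setting is no longer supported in version 9.0. The function name backup_vm, the retention of three copies, and the APPLY switch are choices made for this example; without APPLY=1 the command is only printed.

```shell
#!/bin/sh
# Build a vzdump command: snapshot mode, zstd compression,
# keep only the three most recent backups of this guest.
# Arguments: guest ID, storage name (defaults to "local", an assumption).
backup_vm() {
    vmid=$1
    storage=${2:-local}
    set -- vzdump "$vmid" --storage "$storage" --mode snapshot \
        --compress zstd --prune-backups keep-last=3
    # Print by default; execute only when APPLY=1 is set.
    if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

# Usage on the node:
#   backup_vm 100            # review the command
#   APPLY=1 backup_vm 100    # run the backup
```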

Common Problems and Solutions¶

"Web Interface Disappeared" (GUI Not Opening)¶

Check if the node is accessible via SSH. On the node, execute:
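A possible set of checks is sketched below: the state of the pveproxy and pvedaemon services, whether anything listens on port 8006, and the latest pveproxy log lines. The helper names try and gui_check are made up for this example; try skips tools that are not installed instead of aborting.

```shell
#!/bin/sh
# Run a command only if it exists on this machine; report a non-zero
# exit status instead of stopping.
try() {
    if command -v "$1" >/dev/null 2>&1; then
        "$@" || echo "warn: '$*' exited with status $?"
    else
        echo "skip: $1 not found"
    fi
}

# Checks for a web interface that does not open.
gui_check() {
    try systemctl --no-pager status pveproxy pvedaemon
    try ss -tln 'sport = :8006'
    try journalctl -u pveproxy -n 30 --no-pager
}

# Usage on the node:
#   gui_check
```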

Soft restart of services: systemctl restart pveproxy pvedaemon. If packages were being updated, let apt finish and deal with any hung processes (carefully), then check free space with df -h.

Lost Network After Bridge Editing¶

Connect via console (through provider/VNC/IPMI). Check /etc/network/interfaces and apply it with ifreload -a. Make sure that bridge-ports is the correct physical interface.

VM Does Not Connect to Internet¶

- Ensure that the correct IP/mask/gateway/DNS are set inside the VM.

- Check that the VM's network adapter is attached to the bridge vmbr0 (or the NAT bridge vmbr1).

- If VLAN is used - specify the VLAN Tag in the VM's NIC settings (Hardware > Network Device > VLAN Tag) and allow this VLAN on the switch uplink.

ISO Does Not Boot / Installer Not Visible¶

- Check Boot Order (Options > Boot Order) and that the correct ISO is connected.

- For UEFI, make sure Secure Boot is disabled for the guest if the ISO does not support it.

High Load/Disk "Clog"¶

- Use VirtIO SCSI and enable IO Threads for intensive disk use.

- Do not store backups on the same thin-pool that holds operational disks - better have a separate storage.

"Disconnected" Webcam/USB Device in VM¶

- For USB passthrough, use Hardware > USB Device. If the device stops responding - Stop/Start the VM or reconnect the USB device on the host. Sometimes disabling Use USB3 helps with compatibility.

Updates and Reboot¶

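Update during maintenance windows and make a backup before major upgrades.

Diagnostics: Cheat Sheet¶

Typical commands for a quick check:

- Node network: for example, ip a and ip r;

- Proxmox services: for example, systemctl status pveproxy pvedaemon;

- Disk space: df -h;

- Storages: pvesm status;

- VM device: for example, qm config <VMID>;

- Quick VM restart: for example, qm reboot <VMID>.

Mini-FAQ¶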

Q: Can vmbr0 be renamed? A: Yes, but it's not recommended on a production node - it's easier to leave vmbr0 and add additional bridges (vmbr1) as needed.

Q: Where are ISOs stored by default? A: In the local storage: /var/lib/vz/template/iso.

Q: What distinguishes local from local-lvm? A: local is a regular directory for ISOs, container templates, etc.; local-lvm is an LVM-Thin pool for VM/container disks with snapshots.

Q: How to quickly clone a VM? A: Convert a reference VM into a Template, then Clone > Full/Linked.

Q: How to safely scale CPU/RAM of a VM? A: Shut down the VM and change the resources; on Linux guests some parameters can be changed on the fly, but it is better to plan such changes.

Readiness Checklist for System¶

- Access to https://<server_IP>:8006 exists;

- vmbr0 is set up and the node has internet access;

- ISOs are loaded into storage;

- First VM is created and installed;

- Qemu Guest Agent is enabled;

- Backup is configured (vzdump schedule);

- Updates are checked.

Ordering a Server with Proxmox 9 using API¶

To install this software using the API, follow these instructions.

Some of the content on this page was created or translated using AI.